Picture a radiologist in a bustling hospital, reviewing dozens of X-rays each day. Now imagine software that flags potential abnormalities before she’s even opened the image, prioritizing cases by urgency. That’s not science fiction; it’s machine learning at work in healthcare today. Machine learning models power these systems, and understanding them matters more than ever as AI reshapes every sector from finance to entertainment.

The Core of Machine Learning Models: What They Are and Why They Matter

Machine learning models are mathematical constructs that detect patterns in data, ranging from simple linear regression to complex neural networks. While these algorithms have existed for decades, advances in compute power and data availability have accelerated adoption. The global AI market reached $196.63 billion in 2023 and is projected to grow at a 37.3% CAGR through 2030, according to Grand View Research.

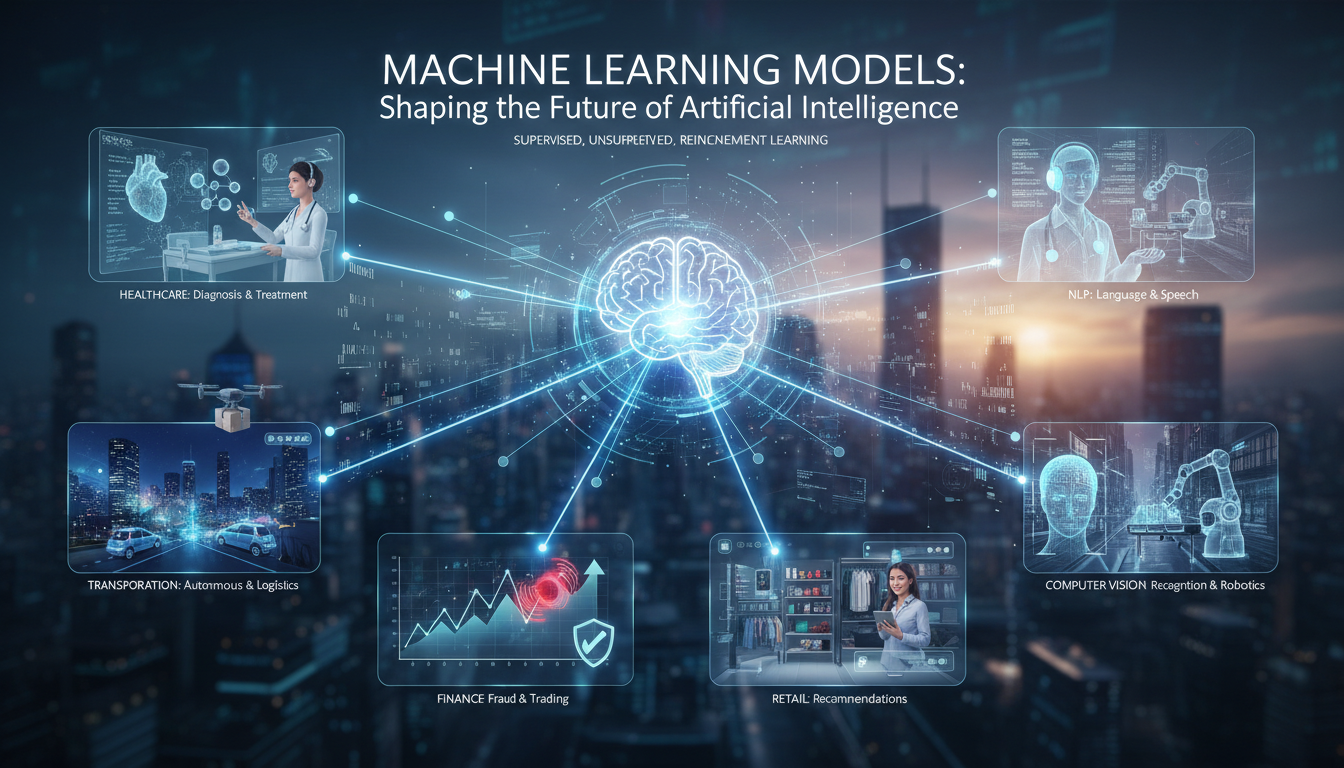

Key terms include “supervised learning,” “unsupervised learning,” “reinforcement learning,” and “deep learning.” Supervised learning fits a model to labeled examples; unsupervised learning discovers structure in unlabeled data through methods like clustering or dimensionality reduction; reinforcement learning improves its behavior from environmental feedback in the form of rewards. Deep learning is not a fourth paradigm but a family of models, multilayer neural networks, that can be trained in any of these three settings.
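To make the supervised/unsupervised distinction concrete, here is a minimal sketch in plain Python (no ML library assumed). The same six one-dimensional points are used twice: a nearest-centroid classifier learns from labels, while a simple k-means groups the points without them. The data and labels are invented for illustration.

```python
# Toy 1-D data (e.g. a single sensor reading). Values are illustrative only.
points = [1.0, 1.2, 0.8, 5.0, 5.3, 4.9]
labels = ["low", "low", "low", "high", "high", "high"]  # available only in the supervised case


def fit_nearest_centroid(xs, ys):
    """Supervised: learn one centroid per label from labeled examples."""
    sums, counts = {}, {}
    for x, y in zip(xs, ys):
        sums[y] = sums.get(y, 0.0) + x
        counts[y] = counts.get(y, 0) + 1
    return {y: sums[y] / counts[y] for y in sums}


def predict(centroids, x):
    """Assign x to the label whose centroid is closest."""
    return min(centroids, key=lambda y: abs(centroids[y] - x))


def kmeans_1d(xs, k=2, iters=10):
    """Unsupervised: find k cluster centers without any labels (Lloyd's algorithm)."""
    centers = sorted(xs)[:: max(1, len(xs) // k)][:k]  # simple deterministic initialization
    for _ in range(iters):
        clusters = [[] for _ in centers]
        for x in xs:
            nearest = min(range(len(centers)), key=lambda i: abs(centers[i] - x))
            clusters[nearest].append(x)
        # Move each center to the mean of its cluster (keep it if the cluster is empty).
        centers = [sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)]
    return sorted(centers)


centroids = fit_nearest_centroid(points, labels)
print(predict(centroids, 4.5))  # high
print(kmeans_1d(points))        # two centers, near 1.0 and 5.07; the clusters carry no names
```

The supervised model can name its output because it was trained on labels; k-means only reports that two groups exist, and a person must decide what they mean.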

Why They Matter

- They automate analysis, letting organizations draw insights from data volumes no human team could review.

- They personalize experiences—in retail, streaming, healthcare, and beyond.

- They enable new capabilities—autonomous vehicles, predictive maintenance, natural language understanding.

Beyond these applications, machine learning models serve as tools of experimentation—enabling researchers and engineers to test hypotheses, simulate scenarios, and push the boundaries of what intelligence can achieve.

Building Blocks: From Data to Trained Model

Building a machine learning model resembles constructing a house: data serves as the foundation, but algorithms, infrastructure, tuning strategies, and human judgment complete the structure. Steps such as feature engineering and error analysis in particular depend on understanding the problem domain, not just the data.

Step 1: Gathering and Preprocessing Data

Data preparation often consumes the most time in ML projects. Real-world data is typically incomplete, biased, and noisy. Consider a healthcare dataset with missing patient records or user logs with inconsistent formats. The cleaning process includes:

- Removing duplicates and erroneous records.

- Imputing missing values, or dropping the affected records when imputation would distort the data.

- Normalizing numerical features and encoding categorical ones so every input the model receives is numeric.
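The cleaning steps above can be sketched on a handful of hypothetical patient-style records. This is a plain-Python illustration, assuming median imputation and min-max scaling as the chosen strategies; libraries such as pandas or scikit-learn offer production versions of each step.

```python
import statistics

# Hypothetical raw records: one exact duplicate, one missing age, one categorical field.
raw = [
    {"id": 1, "age": 34, "city": "Lyon"},
    {"id": 2, "age": None, "city": "Paris"},
    {"id": 1, "age": 34, "city": "Lyon"},  # exact duplicate
    {"id": 3, "age": 58, "city": "Paris"},
]


def deduplicate(records):
    """Drop exact duplicate records, keeping the first occurrence."""
    seen, out = set(), []
    for r in records:
        key = tuple(sorted(r.items()))
        if key not in seen:
            seen.add(key)
            out.append(r)
    return out


def impute_median(records, field):
    """Fill missing numeric values with the median of the observed values."""
    med = statistics.median(r[field] for r in records if r[field] is not None)
    return [{**r, field: med if r[field] is None else r[field]} for r in records]


def min_max_scale(records, field):
    """Rescale a numeric field to the range [0, 1]."""
    vals = [r[field] for r in records]
    lo, hi = min(vals), max(vals)
    span = (hi - lo) or 1  # avoid division by zero when all values are equal
    return [{**r, field: (r[field] - lo) / span} for r in records]


def one_hot(records, field):
    """Replace a categorical field with one 0/1 column per category."""
    cats = sorted({r[field] for r in records})
    out = []
    for r in records:
        row = {k: v for k, v in r.items() if k != field}
        row.update({f"{field}={c}": int(r[field] == c) for c in cats})
        out.append(row)
    return out


clean = one_hot(min_max_scale(impute_median(deduplicate(raw), "age"), "age"), "city")
for row in clean:
    print(row)
```

One design caution: the median, minimum, and maximum should be computed on the training split only and then reused on validation and test data; otherwise information from held-out data leaks into training.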

Preprocessing also involves feature extraction: converting raw text into embeddings, for instance, or deriving pixel-level features from images. In my experience, these steps frequently determine whether a model succeeds or fails.
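Learned embeddings require a trained model, so the sketch below swaps in a simpler, older feature-extraction technique, bag-of-words counting, to show the general idea: raw text becomes a fixed-length numeric vector. The tokenizer and example sentences are assumptions for illustration.

```python
import re
from collections import Counter


def tokenize(text):
    """Lowercase and split on non-letter characters (a deliberately crude tokenizer)."""
    return [t for t in re.split(r"[^a-z]+", text.lower()) if t]


def build_vocab(docs):
    """Map each distinct token in the corpus to a column index."""
    vocab = sorted({tok for d in docs for tok in tokenize(d)})
    return {tok: i for i, tok in enumerate(vocab)}


def bag_of_words(text, vocab):
    """Turn raw text into a fixed-length count vector; unknown words are ignored."""
    vec = [0] * len(vocab)
    for tok, n in Counter(tokenize(text)).items():
        if tok in vocab:
            vec[vocab[tok]] = n
    return vec


docs = ["Chest X-ray shows no anomaly", "Anomaly in left lung", "No anomaly found"]
vocab = build_vocab(docs)
print(vocab)
print(bag_of_words("No anomaly, no fracture", vocab))
```

An embedding model would replace these sparse counts with dense vectors in which similar meanings land near each other, but the interface is the same: text in, numbers out.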

Step 2: Choosing the Right Algorithm

Algorithm selection combines domain expertise with experimentation. Key considerations include:

- Data size and type.

- Task type: classification, regression, clustering, or sequential decision-making (reinforcement learning).

- Constraints like latency requirements or interpretability needs.

For tabular financial data, gradient boosting machines like XGBoost or LightGBM often perform well. Convolutional neural networks have long been the standard for image recognition, though vision transformers now rival them, and transformer-based models like BERT or GPT variants excel at language tasks. Sometimes classical algorithms, such as SVMs or random forests, prove sufficient and more efficient.
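Experimentation usually starts by comparing candidates against a trivial baseline on held-out data. The sketch below does this on a tiny invented tabular dataset, pitting a majority-class baseline against a one-split decision tree (a "stump"), the building block that gradient boosting combines in large numbers. It is a toy stand-in, not XGBoost or LightGBM.

```python
# Hypothetical tabular data: (debt-to-income ratio, defaulted?) pairs, invented for illustration.
train = [(0.10, 0), (0.15, 0), (0.20, 0), (0.35, 0), (0.45, 1), (0.50, 1), (0.60, 1), (0.25, 0)]
valid = [(0.12, 0), (0.30, 0), (0.55, 1), (0.48, 1), (0.40, 0)]


def majority_baseline(data):
    """Always predict the most common training label."""
    ones = sum(y for _, y in data)
    label = int(ones > len(data) / 2)
    return lambda x: label


def fit_stump(data):
    """Pick the single threshold on x that maximizes training accuracy (a one-split tree)."""
    best_t, best_acc = None, -1.0
    for t, _ in sorted(data):
        acc = sum(int(x >= t) == y for x, y in data) / len(data)
        if acc > best_acc:
            best_t, best_acc = t, acc
    return lambda x: int(x >= best_t)


def accuracy(model, data):
    """Share of examples the model labels correctly."""
    return sum(model(x) == y for x, y in data) / len(data)


candidates = {"majority": majority_baseline(train), "stump": fit_stump(train)}
for name, model in candidates.items():
    print(name, accuracy(model, valid))  # scored on held-out data, never on `train`
```

If a more sophisticated model cannot clearly beat the baseline, that is a signal to revisit the features or the problem framing before reaching for a bigger algorithm.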

Step 3: Training and Validation

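Training adjusts a model’s parameters to minimize a loss function such as cross-entropy or mean squared error. The process is iterative: the optimizer makes repeated passes (epochs) over the data, updating parameters after each mini-batch, while hyperparameters such as the learning rate, regularization strength, and architecture depth are set outside training and tuned by comparing runs.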
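The loop below is a minimal illustration of those terms, assuming plain linear regression trained with mini-batch gradient descent on mean squared error. The parameters (`w`, `b`) are learned; the learning rate, epoch count, and batch size are hyperparameters fixed beforehand. The synthetic data is generated from y = 3x + 2 with small noise.

```python
import random

random.seed(0)
# Synthetic data from y = 3x + 2 plus small Gaussian noise (assumed for illustration).
data = [(x / 50, 3 * (x / 50) + 2 + random.gauss(0, 0.05)) for x in range(100)]


def train(data, lr=0.1, epochs=200, batch_size=10):
    """Fit y ~ w*x + b by mini-batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0                      # parameters: learned during training
    for _ in range(epochs):              # one epoch = one full pass over the data
        random.shuffle(data)
        for i in range(0, len(data), batch_size):
            batch = data[i:i + batch_size]
            n = len(batch)
            # Gradients of MSE = mean((w*x + b - y)^2) with respect to w and b
            grad_w = sum(2 * (w * x + b - y) * x for x, y in batch) / n
            grad_b = sum(2 * (w * x + b - y) for x, y in batch) / n
            w -= lr * grad_w             # lr is a hyperparameter, chosen outside training
            b -= lr * grad_b
    return w, b


w, b = train(data)
print(round(w, 2), round(b, 2))  # should land close to 3 and 2
```

Setting `lr` too high makes the updates overshoot and diverge, while setting it too low leaves the model unconverged after the fixed number of epochs, which is one reason the learning rate is often among the first hyperparameters tuned.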

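Validation guards against overfitting and underfitting by measuring performance on data the model never trained on: a held-out validation set, k-fold cross-validation, or a separate dataset collected under real-world conditions. A final test set should stay untouched until tuning is complete; otherwise it no longer gives an unbiased estimate. In my observation, hyperparameter adjustments, like modifying learning rates or adding dropout, can yield disproportionate performance improvements.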
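A minimal k-fold cross-validation helper in plain Python, as a sketch: it splits example indices into k contiguous folds and averages a score over them. The `fit` and `score` callables are placeholders for whatever model and metric you use; scikit-learn’s `KFold` and `cross_val_score` provide tested equivalents.

```python
def k_fold_indices(n, k):
    """Yield (train_idx, val_idx) pairs for k-fold cross-validation over n examples.

    Each example lands in exactly one validation fold; fold sizes differ by at most one.
    """
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val = list(range(start, start + size))
        train = list(range(0, start)) + list(range(start + size, n))
        yield train, val
        start += size


def cross_validate(xs, ys, fit, score, k=5):
    """Average a validation score across k folds; `fit` and `score` are caller-supplied."""
    scores = []
    for train_idx, val_idx in k_fold_indices(len(xs), k):
        model = fit([xs[i] for i in train_idx], [ys[i] for i in train_idx])
        scores.append(score(model, [xs[i] for i in val_idx], [ys[i] for i in val_idx]))
    return sum(scores) / len(scores)


# Usage with a deliberately trivial "model" that predicts the training mean.
def fit_mean(X, Y):
    return sum(Y) / len(Y)


def mse(model, X, Y):
    return sum((model - y) ** 2 for y in Y) / len(Y)


xs = list(range(10))
ys = [2 * x for x in xs]
print(cross_validate(xs, ys, fit_mean, mse, k=5))
```

Because these folds are contiguous, shuffle the data first unless it is time-ordered; for time series, folds should respect time so the model never trains on the future.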

Emerging Model Types: Where the Field Is Heading

The machine learning landscape evolves rapidly. Here are key developments shaping the industry:

Transformer Architectures and Large Language Models

Transformers have largely replaced recurrent neural networks for language tasks and increasingly compete with CNNs in vision, thanks to self-attention, which models long-range dependencies directly and parallelizes well on modern hardware. BERT, PaLM, and the GPT family (including the models behind ChatGPT) share this architectural foundation. Pretrained on massive datasets, these models adapt through fine-tuning or prompt engineering, so a single architecture now powers applications from legal document review to medical note generation.
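The mechanism behind that long-range modeling is scaled dot-product attention, softmax(Q K^T / sqrt(d_k)) V, from the original Transformer paper. A minimal plain-Python version follows; real models add learned projection matrices, multiple heads, and positional information, and run on tensors, all omitted here. The toy vectors are arbitrary.

```python
import math


def softmax(row):
    """Normalize scores into weights that sum to 1."""
    m = max(row)  # subtract the max for numerical stability
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(Q, K, V):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.

    Q, K, V are lists of row vectors (one row per token). Every query attends to
    every key, so the first token can draw on the last one directly, however far apart.
    """
    d_k = len(K[0])
    out = []
    for q in Q:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        weights = softmax(scores)
        # Each output row is a weighted average of the value rows.
        out.append([sum(w * v[j] for w, v in zip(weights, V)) for j in range(len(V[0]))])
    return out


# Three toy tokens with 2-dimensional queries, keys, and values.
Q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
K = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
V = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
for row in attention(Q, K, V):
    print([round(x, 3) for x in row])
```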

Self-Supervised and Unsupervised Learning

Labeling data is expensive and time-consuming. Self-supervised methods learn from unlabeled data using proxy tasks like masked language modeling or contrastive learning. Research from Google and Meta indicates these approaches reduce manual labeling requirements while scaling to large, uncurated datasets. Multimodal learning—combining image, audio, and text—is producing increasingly sophisticated representations.
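Masked language modeling shows how self-supervision manufactures labels from unlabeled text: hide some tokens and ask the model to recover them. The sketch below builds one such training pair. It is simplified: the `[MASK]` token name follows BERT’s convention, but BERT’s additional rule of sometimes substituting a random token or leaving a selected token unchanged is omitted.

```python
import random


def make_mlm_example(tokens, mask_rate=0.15, mask_token="[MASK]", seed=None):
    """Create a self-supervised training pair from unlabeled text.

    Randomly hides a fraction of tokens; the hidden originals become the labels.
    """
    rng = random.Random(seed)
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_rate:
            inputs.append(mask_token)
            labels.append(tok)    # the model must predict this original token
        else:
            inputs.append(tok)
            labels.append(None)   # no loss is computed at unmasked positions
    return inputs, labels


sentence = "the scan shows a small shadow in the left lung".split()
inputs, labels = make_mlm_example(sentence, mask_rate=0.3, seed=1)
print(inputs)
print(labels)
```

No human labeled anything; the supervision signal is the text itself, which is why these methods scale to large, uncurated corpora.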

Reinforcement Learning in Real-World Systems

While reinforcement learning made its name in controlled environments such as games and robotics simulators, production applications are expanding: dynamic recommender systems, data center resource allocation, and some automated trading systems now use RL. Deployment remains challenging because of safety concerns and system complexity, which is driving research into safe and constrained RL with human oversight.
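The core feedback loop of RL can be shown with tabular Q-learning on a hypothetical five-state corridor where only the rightmost state pays a reward. This is a textbook algorithm on a toy environment, not a production recipe; the learning rate `alpha`, discount `gamma`, and exploration rate `epsilon` are illustrative choices.

```python
import random

N_STATES = 5        # states 0..4 on a line; reaching state 4 ends the episode with reward 1
ACTIONS = [-1, +1]  # move left or right


def step(state, action):
    """Hypothetical environment: move along the corridor, clamped at both ends."""
    nxt = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if nxt == N_STATES - 1 else 0.0
    return nxt, reward, nxt == N_STATES - 1


def q_learning(episodes=300, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    Q = [[0.0, 0.0] for _ in range(N_STATES)]  # Q[state][action index]
    for _ in range(episodes):
        s, done = 0, False
        while not done:
            # Epsilon-greedy: explore sometimes (or on ties), otherwise take the best known action.
            if rng.random() < epsilon or Q[s][0] == Q[s][1]:
                a = rng.randrange(2)
            else:
                a = 0 if Q[s][0] > Q[s][1] else 1
            s2, r, done = step(s, ACTIONS[a])
            target = r if done else r + gamma * max(Q[s2])
            Q[s][a] += alpha * (target - Q[s][a])  # move the estimate toward the feedback
            s = s2
    return Q


Q = q_learning()
policy = ["left" if q[0] > q[1] else "right" for q in Q[:-1]]
print(policy)  # expected to learn "right" in every non-terminal state
```

Production systems replace the table with a neural network and the corridor with a simulator or logged data, which is where the safety and complexity issues above come in.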

Hybrid Models: Combining Classical and Deep Learning

Not all problems require pure deep learning. Hybrid architectures that combine rule-based logic with neural networks, or classical feature-based models with learned embeddings, often deliver competitive performance with greater transparency. In regulated industries like healthcare and finance, that interpretability is often essential for compliance.
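A minimal sketch of the hybrid pattern for a hypothetical credit decision: human-written rules run first and can override the model, and a logistic-style score handles everything else. The weights, thresholds, and rules are invented for illustration; a real system would load a trained model and encode actual policy.

```python
import math

# Hypothetical learned weights; in practice these would come from a trained model.
WEIGHTS = {"debt_to_income": -4.0, "years_employed": 0.3}
BIAS = 1.0


def model_score(applicant):
    """Logistic-regression-style score in (0, 1): estimated probability of repayment."""
    z = BIAS + sum(w * applicant[f] for f, w in WEIGHTS.items())
    return 1 / (1 + math.exp(-z))


def decide(applicant, approve_at=0.6):
    """Hybrid decision: explicit rules run first and can override the learned score."""
    # Rule layer: hard constraints written by people, easy to audit (hypothetical policy).
    if applicant["age"] < 18:
        return "decline", "rule: applicant under 18"
    if applicant["debt_to_income"] > 0.8:
        return "refer", "rule: debt-to-income above 0.8 requires manual review"
    # Learned layer: the statistical model handles everything the rules do not cover.
    p = model_score(applicant)
    verdict = "approve" if p >= approve_at else "decline"
    return verdict, f"model: repayment probability {p:.2f}"


print(decide({"age": 17, "debt_to_income": 0.1, "years_employed": 0}))
print(decide({"age": 40, "debt_to_income": 0.2, "years_employed": 10}))
```

Every decision carries a reason string, so an auditor can tell whether a rule or the model produced it, which is the transparency benefit hybrids are chosen for.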

Real-World Example: Healthcare Diagnostics

Consider a hospital deploying ML for X-ray anomaly detection. The workflow typically involves:

- Aggregating thousands of labeled X-rays with tagged anomalies.

- Cleaning metadata and removing low-quality scans.

- Fine-tuning a CNN pretrained on ImageNet.

- Implementing explainability tools like Grad-CAM to highlight suspicious regions.

- Validating against held-out data and piloting with clinicians.

- Iteratively adjusting thresholds based on radiologist feedback.
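The final step, tuning the alert threshold, can be sketched as choosing the highest score threshold that still catches a target share of confirmed anomalies (sensitivity), then reporting the cost in specificity. The scores, labels, and the 80% target are hypothetical; the right target is a clinical decision, not a technical one.

```python
def sensitivity_specificity(scores, labels, threshold):
    """Sensitivity: share of true anomalies flagged. Specificity: share of normals not flagged."""
    tp = sum(s >= threshold and y == 1 for s, y in zip(scores, labels))
    fn = sum(s < threshold and y == 1 for s, y in zip(scores, labels))
    tn = sum(s < threshold and y == 0 for s, y in zip(scores, labels))
    fp = sum(s >= threshold and y == 0 for s, y in zip(scores, labels))
    return tp / (tp + fn), tn / (tn + fp)


def pick_threshold(scores, labels, min_sensitivity=0.9):
    """Highest threshold that still flags at least `min_sensitivity` of true anomalies."""
    for t in sorted(set(scores), reverse=True):
        sens, spec = sensitivity_specificity(scores, labels, t)
        if sens >= min_sensitivity:
            return t, sens, spec
    return None


# Hypothetical held-out model scores and radiologist-confirmed labels (1 = anomaly).
scores = [0.95, 0.90, 0.80, 0.70, 0.65, 0.40, 0.30, 0.20, 0.10, 0.05]
labels = [1, 1, 0, 1, 1, 0, 0, 1, 0, 0]
print(pick_threshold(scores, labels, min_sensitivity=0.8))
```

On this data, demanding 100% sensitivity pushes the threshold down to 0.2 and halves specificity to 0.4, so many more normal scans get flagged: the kind of trade-off radiologist feedback helps settle.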

Trust is essential—requiring transparency, ongoing evaluation, and human oversight. Models must adapt to new anomalies or population shifts over time.

Risks and Ethical Considerations

ML systems carry significant risks: algorithmic bias, privacy invasion, adversarial attacks, and overreliance on automated decisions. A credit scoring model trained on skewed data may systematically disadvantage protected groups.

Mitigation strategies include:

- Regular fairness audits using standardized metrics such as demographic parity or equalized odds.

- Explainability tools like SHAP, LIME, and saliency maps.

- Diverse stakeholder involvement in model design.

- Continuous performance monitoring to detect drift.
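A fairness audit can start with two widely used group metrics: demographic parity difference (the gap in positive-prediction rates between groups) and equal opportunity difference (the gap in true-positive rates). The sketch below computes both in plain Python on an invented ten-person audit sample; what counts as an acceptable gap is a policy decision the code cannot make.

```python
def rate(preds, mask):
    """Share of positive predictions among the selected rows."""
    chosen = [p for p, m in zip(preds, mask) if m]
    return sum(chosen) / len(chosen)


def fairness_report(preds, labels, groups, a, b):
    """Compare groups a and b on two common fairness metrics.

    demographic_parity_diff: gap in overall approval rates.
    equal_opportunity_diff: gap in approval rates among truly qualified applicants (TPR).
    """
    in_a = [g == a for g in groups]
    in_b = [g == b for g in groups]
    dp = rate(preds, in_a) - rate(preds, in_b)
    tpr_a = rate(preds, [m and y == 1 for m, y in zip(in_a, labels)])
    tpr_b = rate(preds, [m and y == 1 for m, y in zip(in_b, labels)])
    return {"demographic_parity_diff": dp, "equal_opportunity_diff": tpr_a - tpr_b}


# Hypothetical audit sample: model approvals (1), true repayment outcomes, and group membership.
preds = [1, 1, 1, 0, 1, 0, 0, 1, 0, 0]
labels = [1, 1, 0, 0, 1, 1, 0, 1, 1, 0]
groups = ["A", "A", "A", "A", "A", "B", "B", "B", "B", "B"]
print(fairness_report(preds, labels, groups, "A", "B"))
```

Here group A is approved at a rate of 0.8 versus 0.2 for group B, and qualified applicants in group B are approved only a third of the time, a disparity that would warrant investigation.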

These safeguards are essential for trustworthy AI deployment.

Organizational Readiness: Building Infrastructure and Culture

Scaling ML requires robust systems and cultural alignment:

- Data infrastructure—for collection, storage, and labeling.

- MLOps pipelines—for continuous integration, deployment, and monitoring.

- Governance frameworks—for risk management, auditing, and compliance.

- Talent—skilled practitioners, domain experts, and operational teams.
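One concrete monitoring job an MLOps pipeline might run is a drift check on input features. The sketch below computes the Population Stability Index (PSI), a common drift statistic, between training-time and live values of one feature. The bin edges and data are hypothetical, values outside the edges are ignored, and the 0.25 alert level is a commonly quoted rule of thumb rather than a standard.

```python
import math


def psi(expected, actual, edges):
    """Population Stability Index between a reference sample and a live sample.

    PSI = sum over bins of (a - e) * ln(a / e), where e and a are bin proportions.
    """
    def proportions(values):
        counts = [0] * (len(edges) - 1)
        for v in values:
            for i in range(len(edges) - 1):
                last = i == len(edges) - 2
                # Bins are [lo, hi); the last bin also includes its upper edge.
                if edges[i] <= v < edges[i + 1] or (last and v == edges[-1]):
                    counts[i] += 1
                    break
        total = sum(counts)
        return [max(c / total, 1e-6) for c in counts]  # small floor avoids log(0)

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical feature values: ages seen at training time vs. ages arriving in production.
edges = [0, 25, 50, 75, 100]
train_ages = [22, 30, 35, 41, 47, 52, 58, 63, 70, 80]
live_ages = [23, 26, 28, 31, 33, 36, 38, 44, 49, 66]
score = psi(train_ages, live_ages, edges)
print(round(score, 3), "drift alert" if score > 0.25 else "ok")
```

Scheduled per feature, a check like this can trigger retraining or a human review before degraded predictions reach users.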

Organizations often stall when they build prototypes without the infrastructure to deploy and maintain them. McKinsey research indicates that companies with mature ML operations achieve 2-3x greater business value from their models.

Trends to Watch: The Horizon Ahead

- TinyML—running optimized models on resource-constrained edge devices like sensors and wearables.

- Federated learning—training across decentralized data sources while preserving privacy.

- Explainable AI requirements—regulatory mandates increasingly demand algorithmic transparency.

- Energy-efficient training—growing environmental awareness driving innovation in sustainable computing.

- Multimodal AI systems—integrating vision, text, audio, and emerging sensor modalities.
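Federated learning’s central step can be sketched with federated averaging (FedAvg): each client trains locally on its own data and sends back only model weights, which the server averages, weighted by client dataset size. The two hypothetical clients, the linear model, and the round count are assumptions; real deployments add client sampling, secure aggregation, and often differential privacy.

```python
def fed_avg(client_updates):
    """Federated averaging: combine client weights, weighted by local dataset size.

    client_updates: list of (weights, n_examples) pairs. Only weights leave each
    client; the raw training data never does.
    """
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [sum(w[i] * n for w, n in client_updates) / total for i in range(dim)]


def local_update(weights, data, lr=0.1, epochs=5):
    """One client's local training: SGD on y ~ w0 + w1*x using only its own data."""
    w0, w1 = weights
    for _ in range(epochs):
        for x, y in data:
            err = w0 + w1 * x - y
            w0 -= lr * 2 * err       # gradient of squared error w.r.t. w0
            w1 -= lr * 2 * err * x   # gradient of squared error w.r.t. w1
    return [w0, w1]


# Hypothetical clients (e.g. hospitals), each holding private samples of y = 1 + 2x.
clients = [
    [(0.0, 1.0), (0.5, 2.0)],
    [(1.0, 3.0), (0.2, 1.4), (0.8, 2.6)],
]
global_w = [0.0, 0.0]
for _ in range(100):  # communication rounds
    updates = [(local_update(global_w, d), len(d)) for d in clients]
    global_w = fed_avg(updates)
print([round(w, 2) for w in global_w])  # should approach [1.0, 2.0]
```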

Concluding Summary

Machine learning models drive AI’s expansion—from incremental improvements in recommendation systems to breakthroughs in autonomous vehicles and generative text. Building effective models requires balancing art and engineering, from data preparation through algorithm selection, tuning, validation, and deployment. Emerging architectures like transformers and self-supervised methods are reshaping capabilities, while healthcare diagnostics and other real-world applications demonstrate both promise and potential pitfalls.

Addressing risks like bias and opacity demands fairness audits, explainability tools, and governance frameworks. Scaling impact depends on infrastructure, operations, cross-functional collaboration, and organizational maturity. The frontier includes miniaturized models, privacy-preserving training, multimodal intelligence, and sustainable AI—all immediately relevant to practitioners today.

FAQs

What are the main types of machine learning models?

Machine learning models fall into supervised (using labeled data), unsupervised (discovering structure without labels), and reinforcement learning (learning via feedback in dynamic environments). Each suits different tasks—classification, clustering, or sequential decision-making.

Why is transformer architecture so popular now?

Transformers excel at capturing long-range dependencies in sequential data, making them ideal for language and multimodal tasks. Their scalability allows large models to be fine-tuned or prompted for diverse applications, as demonstrated by the rapid adoption of models like GPT-4 and Claude.

How do organizations ensure ML models are fair and transparent?

Best practices include fairness audits using standardized metrics, explainability tools (SHAP, LIME, saliency maps), diverse stakeholder reviews, and continuous monitoring for drift or emerging bias. Regulatory requirements increasingly mandate transparency in sensitive domains.

What infrastructure supports deploying ML models effectively?

Effective deployment requires robust data pipelines, MLOps workflows for continuous integration and monitoring, model versioning systems, and production performance tracking. Organizational culture and cross-team collaboration are equally critical for success.

What’s the role of self-supervised learning in future models?

Self-supervised learning reduces dependence on labeled data by training models on proxy tasks like predicting missing input components. This approach leverages vast unlabeled datasets efficiently, improving generalization across downstream applications.

Are there ethical concerns with machine learning models?

Significant ethical concerns include data privacy violations, algorithmic bias and discrimination, adversarial vulnerabilities, and overreliance on automated decisions. Mitigation requires technical safeguards, inclusive design practices, governance frameworks, and human oversight to maintain trust and safety.

Machine learning continues advancing rapidly, and staying informed about model architectures, deployment best practices, and ethical considerations is essential for anyone working with AI systems today.

February 11, 2026
