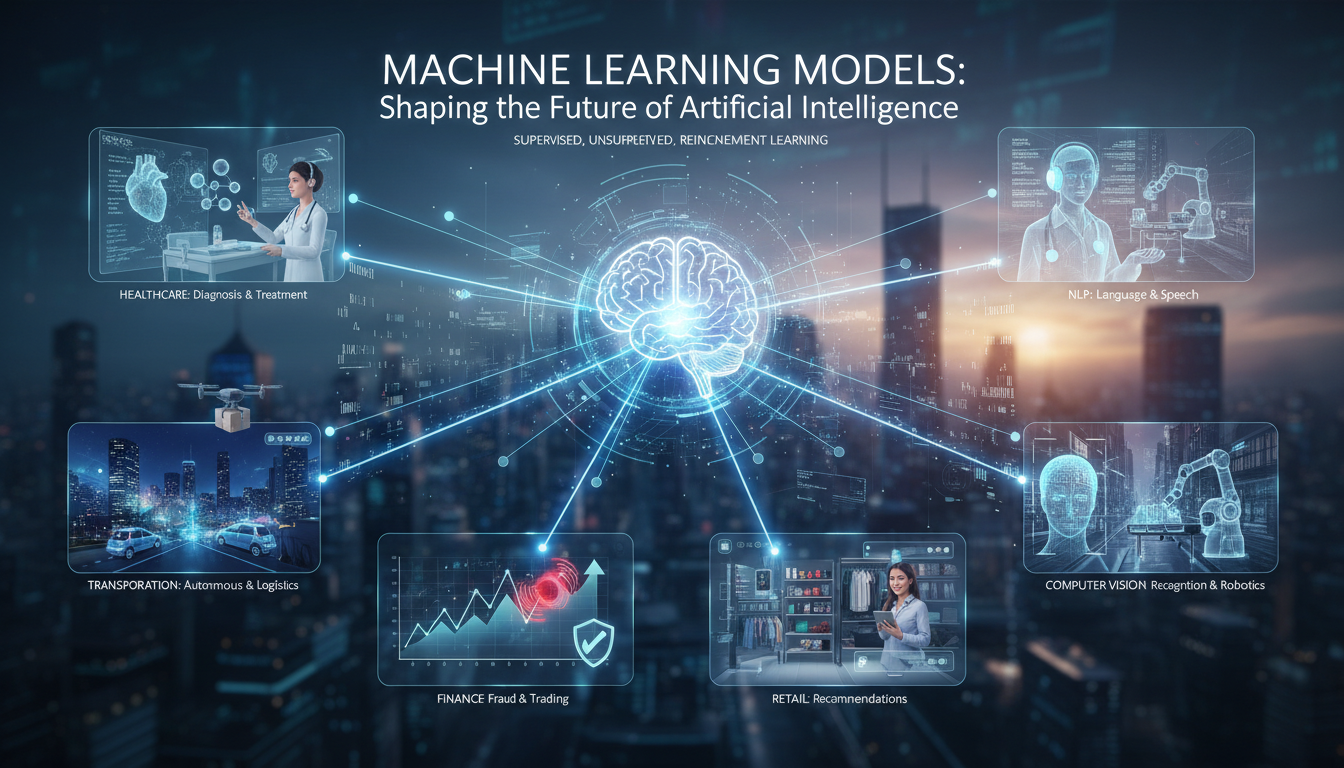

Machine Learning Models: Shaping the Future of Artificial Intelligence. That’s the idea, right? We all know AI is transforming industries such as healthcare, finance, and entertainment, but the engines beneath all of this are diverse, ever-evolving machine learning models. This article takes a slightly messy but curious journey through how these models are built, why they matter, and what we might expect in the not-so-distant future. There will be a bit of meandering and a few “oh, and also” moments, because that’s how human writing often flows. So bear with me.

The Core of Machine Learning Models: What They Are and Why They Matter

Machine learning models are, at their heart, mathematical constructs that detect patterns in data. They range from simple linear regression to complex neural networks. These models are not new (many of the core ideas have been around for decades), but the growth in available data and advances in compute power have propelled them into the spotlight.

You’ll often hear terms like “deep learning,” “supervised learning,” and “reinforcement learning,” each referring to a different technique or approach. For example, supervised learning relies on labeled data, while reinforcement learning depends on feedback from an environment. And then there’s unsupervised learning, where the model tries to find structure in unlabeled data through methods like clustering or dimensionality reduction. It sounds dry, but it underpins recommendation engines, anomaly detection, and more.
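To make the labeled-versus-unlabeled distinction a bit more tangible, here is a minimal sketch in plain Python. The function names, the toy one-dimensional data, and the fixed two-cluster setup are all assumptions made for illustration; real projects would normally lean on a library.

```python
def fit_class_centroids(xs, ys):
    """Supervised: use the labels to compute one centroid per class."""
    sums, counts = {}, {}
    for x, y in zip(xs, ys):
        sums[y] = sums.get(y, 0.0) + x
        counts[y] = counts.get(y, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}


def two_means_1d(xs, iterations=10):
    """Unsupervised: find two cluster centers without looking at any labels."""
    centers = [min(xs), max(xs)]  # simple, deterministic initialization
    for _ in range(iterations):
        clusters = ([], [])
        for x in xs:
            nearest = 0 if abs(x - centers[0]) <= abs(x - centers[1]) else 1
            clusters[nearest].append(x)
        # Keep the old center if a cluster ends up empty
        centers = [
            sum(c) / len(c) if c else centers[i]
            for i, c in enumerate(clusters)
        ]
    return centers


# Hypothetical toy data: two obvious groups of values
xs = [1.0, 1.2, 0.8, 5.0, 5.2, 4.8]
ys = ["low", "low", "low", "high", "high", "high"]

class_centroids = fit_class_centroids(xs, ys)  # needs the labels
cluster_centers = two_means_1d(xs)             # never sees the labels
```

Both routes end up with roughly the same two centers on this data; the difference is that only the supervised version knows which center is called “low” and which is called “high.”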

Why They Matter

- They automate insights—letting organizations scale intelligence beyond human capacity.

- They personalize experiences—in retail, streaming, healthcare, etc.

- They enable new capabilities—like autonomous vehicles, predictive maintenance, natural language understanding.

Beyond that, machine learning models are also tools of experimentation—letting researchers and engineers test hypotheses, simulate scenarios, and push the bounds of what intelligence can do. In many ways, these models are the scaffolding for future innovation.

Building Blocks: From Data to Trained Model

If building a house starts with bricks, building a machine learning model starts with data. But data alone isn’t enough—you need algorithms, infrastructure, tuning strategies, and a bit of that messy human judgment to do things like feature engineering and error analysis.

Step 1: Gathering and Preprocessing Data

This is often the most tedious part. Real-world data is messy—it’s incomplete, biased, noisy. Think of a healthcare dataset with missing entries, or user logs with inconsistent formats. So the first task is cleaning:

- Removing duplicates or obviously wrong records.

- Imputing missing values—or discarding them when necessary.

- Normalizing or encoding features, especially if the model expects numerical input.

Preprocessing can also include feature extraction—like turning raw text into embeddings, or transforming images into relevant pixel features. These steps can make or break model performance. A human-centric, rather than purely algorithmic, process often works best: “Oh, I see these values are skewed—maybe I log-transform them,” which brings us to the next step.
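The cleaning steps above (deduplicate, impute, transform, normalize) can be sketched with nothing but the standard library. This is an illustration under assumptions: the `preprocess` helper, the `(record_id, value)` row shape, and the choice of median imputation are made up for the example, and it assumes the values are non-negative and not all identical.

```python
import math
import statistics


def preprocess(rows):
    """Clean a list of (record_id, value) pairs; value may be None."""
    # 1. Remove exact duplicates while keeping first-seen order.
    seen = set()
    unique = []
    for row in rows:
        if row not in seen:
            seen.add(row)
            unique.append(row)

    # 2. Impute missing values with the median of the observed ones.
    observed = [v for _, v in unique if v is not None]
    median = statistics.median(observed)
    filled = [v if v is not None else median for _, v in unique]

    # 3. Log-transform to reduce right skew (log1p also handles zeros).
    logged = [math.log1p(v) for v in filled]

    # 4. Standardize to zero mean and unit variance.
    mean = statistics.fmean(logged)
    std = statistics.pstdev(logged)
    return [(x - mean) / std for x in logged]


# Hypothetical raw data: one duplicate, one missing value, one large outlier
raw = [("a", 10.0), ("b", None), ("a", 10.0), ("c", 1000.0), ("d", 20.0)]
features = preprocess(raw)
```

The order matters here: imputing before the log transform keeps the median in the original units, which is usually easier to sanity-check by eye.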

Step 2: Choosing the Right Algorithm

Here’s where domain knowledge meets experimentation. Choosing algorithms involves considering:

- The size and type of data.

- The prediction task—classification, regression, clustering, reinforcement.

- Constraints—like latency for real-time applications, or interpretability for regulatory contexts.

So, for tabular data in finance, you might try gradient boosting libraries like XGBoost or LightGBM. For image recognition, convolutional neural networks are popular. For language, transformer-based models like BERT or GPT variants dominate. Sometimes classical algorithms such as SVMs or random forests are sufficient and more efficient. There is no single right answer; it depends on the data, the task, and the constraints.

Step 3: Training and Validation

Once your data and algorithm are aligned, training begins. This involves optimizing parameters (weights, biases, etc.) to minimize a loss function—like cross-entropy or mean squared error. Training is iterative: epochs, batches, tuning hyperparameters such as learning rate, regularization, architecture depth.
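As a rough picture of what “optimizing parameters to minimize a loss function” means, here is a small sketch of full-batch gradient descent on mean squared error for a one-feature linear model. The function name, learning rate, epoch count, and toy data are assumptions chosen so the example converges; nothing here is tuned for real data.

```python
def train_linear(xs, ys, learning_rate=0.05, epochs=500):
    """Fit y ~ w * x + b by full-batch gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        # Prediction error for every training example
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of MSE = (1/n) * sum(error^2) with respect to w and b
        grad_w = (2.0 / n) * sum(e * x for e, x in zip(errors, xs))
        grad_b = (2.0 / n) * sum(errors)
        # Step against the gradient
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b


# Toy data generated from y = 2x + 1, so the ideal answer is known
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]
w, b = train_linear(xs, ys)
```

One pass over the data is an epoch; here each epoch uses the whole dataset as a single batch, whereas larger problems typically split each epoch into mini-batches.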

Validation is crucial for catching overfitting and underfitting. That means holding out a validation set or using cross-validation while you tune, and setting aside a separate test set (ideally real-world data) that is touched only once, as a “final exam.” It’s an interplay: tweaking hyperparameters, retraining, validating again.
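For readers who want to see the mechanics, the two helpers below sketch a shuffled hold-out split and k-fold index generation in plain Python. The helper names and default fractions are assumptions for illustration, and the k-fold version uses contiguous folds, so it assumes the data has already been shuffled.

```python
import random


def train_val_test_split(items, val_frac=0.15, test_frac=0.15, seed=0):
    """Shuffle once, then carve off test and validation portions."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_frac)
    n_val = int(len(shuffled) * val_frac)
    test = shuffled[:n_test]
    val = shuffled[n_test:n_test + n_val]
    train = shuffled[n_test + n_val:]
    return train, val, test


def k_fold_indices(n, k):
    """Yield (train_idx, val_idx) pairs for k roughly equal contiguous folds."""
    fold_sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = list(range(start, start + size))
        train_idx = list(range(0, start)) + list(range(start + size, n))
        yield train_idx, val_idx
        start += size


train, val, test = train_val_test_split(range(100))
folds = list(k_fold_indices(10, 3))
```

With k-fold, every example is used for validation exactly once, which gives a steadier estimate than a single split when data is scarce.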

In practice, tuning is part of the art. Engineers often say, “Well, it was close, but I changed the learning rate, added dropout, and suddenly it worked much better.” Sometimes progress is incremental; sometimes a tuning choice yields surprisingly big gains.

“A tiny adjustment in hyperparameters can catalyze disproportionate performance gains—sometimes you don’t realize it until you test.”

That quote (kind of a paraphrase of common wisdom) encapsulates how subtle shifts can have big effects.
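A toy sweep shows how sensitive training can be to one hyperparameter. The sketch below runs gradient descent on a made-up one-dimensional loss, (w - 3)^2, with four assumed learning rates; the loss, the step count, and the candidate values are all illustrative choices, not a recommendation.

```python
def final_loss(learning_rate, steps=50, start=0.0, target=3.0):
    """Run gradient descent on loss(w) = (w - target)^2 and return the final loss."""
    w = start
    for _ in range(steps):
        gradient = 2.0 * (w - target)
        w -= learning_rate * gradient
    return (w - target) ** 2


# Same problem, same number of steps, only the learning rate changes
learning_rates = [0.01, 0.1, 0.5, 1.1]
results = {lr: final_loss(lr) for lr in learning_rates}
best = min(results, key=results.get)
```

On this loss, the smallest rate is still far from the minimum after 50 steps, the middle rates get very close, and the largest rate overshoots further on every step and diverges. Real loss surfaces are messier, but the pattern of “too small is slow, too large blows up” carries over.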

Emerging Model Types: Where the Field Is Heading

The landscape isn’t static. Here are some developments shaping the near future of machine learning models:

Transformer Architectures and Large Language Models

Once upon a time, recurrent neural networks and CNNs dominated. Now, transformers rule—largely because of their ability to model long-range dependencies effectively. ChatGPT, BERT, PaLM, GPT variants—they all share this transformer core.
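The long-range modeling comes from attention, where every position can look directly at every other position. Below is a minimal sketch of scaled dot-product attention in plain Python. It assumes a single head, no learned projection matrices, and no masking, so it shows the core arithmetic rather than a full transformer layer.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k)
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
    return outputs


# Two identical keys, so the query attends to both values equally
result = attention(
    queries=[[1.0, 0.0]],
    keys=[[1.0, 0.0], [1.0, 0.0]],
    values=[[0.0, 2.0], [4.0, 6.0]],
)
```

Because the weights depend only on query-key similarity, a token at position 1 can draw on a token at position 1,000 as easily as on its neighbor, which is the property recurrent models struggled with.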

These models are trained on massive datasets and adapted through fine-tuning or prompt engineering. For instance, a general-purpose LLM can be fine-tuned for legal document review or medical note generation. It’s wild—to think a single architecture fuels so many applications.

Self-Supervised and Unsupervised Learning

Labels are expensive. That’s why self-supervised methods, which learn from unlabeled data with proxy tasks, are gaining traction. Examples include masked language modeling (predicting missing tokens) or contrastive learning in vision (learning representations by distinguishing similar and dissimilar pairs).
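Here is a simplified sketch of how a masked language modeling example can be built from raw text with no human labels. The `mask_tokens` helper, the `[MASK]` placeholder string, and the 15% default are assumptions for illustration; production recipes are more elaborate than this.

```python
import random

MASK = "[MASK]"  # placeholder token; the exact string is an assumption


def mask_tokens(tokens, mask_prob=0.15, seed=0):
    """Hide a random subset of tokens; the hidden originals become the labels."""
    rng = random.Random(seed)
    inputs, labels = [], []
    for tok in tokens:
        if rng.random() < mask_prob:
            inputs.append(MASK)  # the model sees the placeholder...
            labels.append(tok)   # ...and must predict the original token
        else:
            inputs.append(tok)
            labels.append(None)  # no loss is computed at unmasked positions
    return inputs, labels


sentence = "labels are expensive so models learn from raw text".split()
inputs, labels = mask_tokens(sentence)
```

The supervision signal comes from the data itself, which is why this scales to large uncurated corpora.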

These approaches reduce reliance on manual labeling and scale better to large, uncurated datasets. And they’re getting more creative… like combining modalities (image+audio+text) to learn richer representations.

Reinforcement Learning in Real-World Systems

Reinforcement learning (RL) has long been a playground for simulations such as games and robotics. But more categories are entering production: recommender systems that adapt policies dynamically, resource allocation engines in data centers, even automated trading systems.

Still, RL in the real world is niche—because of risk, complexity, safety concerns. Yet researchers are exploring ways to deploy safe RL with constraints and human oversight.
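The feedback loop at the heart of RL can be sketched with tabular Q-learning on a tiny made-up environment. Everything here is an assumption for illustration: the four-state chain, the reward of 1.0 at the goal, the discount and learning-rate values, and the deterministic sweep over state-action pairs in place of real exploration. It also ignores the safety constraints that production systems need.

```python
GAMMA = 0.9   # discount factor (assumed)
ALPHA = 0.5   # learning rate (assumed)
N_STATES = 4  # states 0..3; state 3 is the terminal goal
ACTIONS = {"left": -1, "right": +1}


def step(state, action):
    """Toy environment: move along a chain, reward 1.0 on reaching the goal."""
    next_state = min(max(state + ACTIONS[action], 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def q_learning(sweeps=200):
    """Learn action values from environment feedback alone."""
    q = {s: {a: 0.0 for a in ACTIONS} for s in range(N_STATES)}
    for _ in range(sweeps):
        # Deterministic sweep over every non-terminal (state, action) pair
        for s in range(N_STATES - 1):
            for a in ACTIONS:
                s2, r, done = step(s, a)
                target = r if done else r + GAMMA * max(q[s2].values())
                q[s][a] += ALPHA * (target - q[s][a])
    return q


q = q_learning()
# Greedy policy: pick the highest-valued action in each non-terminal state
policy = {s: max(q[s], key=q[s].get) for s in range(N_STATES - 1)}
```

Nobody tells the agent that “right” is correct; it infers that from rewards, and the value of earlier states shrinks by the discount factor for each extra step to the goal.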

Hybrid Models: Bringing Classical and Deep Learning Together

Not all problems require pure deep learning. A hybrid that combines rule-based logic with neural nets, or classical feature-based models with learned embeddings, can perform better or be more transparent. In highly regulated fields like finance or healthcare, hybrid models help provide interpretability and compliance.
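One common shape for such a hybrid is “hard rules first, learned score second.” The sketch below is hypothetical throughout: the transaction-review scenario, the rule limits, and the logistic weights in `learned_score` are invented stand-ins for a real policy and a real trained model.

```python
import math


def learned_score(amount, account_age_days):
    """Stand-in for a trained model: a logistic score with made-up weights."""
    z = 0.002 * amount - 0.01 * account_age_days - 1.0
    return 1.0 / (1.0 + math.exp(-z))


def hybrid_decision(amount, account_age_days, country_blocked):
    """Return (decision, reason) so every outcome can be explained."""
    # Hard rules run first and always produce a human-readable reason
    if country_blocked:
        return "reject", "rule: blocked country"
    if amount > 10_000:
        return "review", "rule: amount above manual-review limit"
    # Only cases the rules do not settle are passed to the learned model
    score = learned_score(amount, account_age_days)
    if score >= 0.5:
        return "review", f"model: risk score {score:.2f}"
    return "approve", f"model: risk score {score:.2f}"


decision, reason = hybrid_decision(
    amount=100, account_age_days=365, country_blocked=False
)
```

Returning the reason alongside the decision is the design choice that helps with audits: a reviewer can see whether a rule or the model drove the outcome.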

Real-World Example: Healthcare Diagnostics

Let’s make this concrete: imagine a hospital deploying an ML model to detect anomalies in X-rays. The process might look like:

- Aggregating thousands of labeled X-rays (with anomalies tagged).

- Cleaning metadata and removing low-quality scans.

- Using a CNN architecture pretrained on ImageNet, then fine-tuning it.

- Adding explainability tools—like Grad-CAM—to highlight suspicious regions.

- Validating against a held-out set and piloting with clinicians.

- Iteratively tuning thresholds and incorporating feedback from radiologists.
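The threshold-tuning step in the list above can be sketched as follows. The scores and labels are invented, and the rule used here (pick the highest threshold that still meets a target sensitivity) is one reasonable assumption among several; a real deployment would set the target together with clinicians. The code assumes the data contains at least one positive and one negative case.

```python
def sensitivity_specificity(scores, labels, threshold):
    """Sensitivity and specificity when flagging scores >= threshold."""
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if y == 1 and s >= threshold)
    fn = sum(1 for s, y in pairs if y == 1 and s < threshold)
    tn = sum(1 for s, y in pairs if y == 0 and s < threshold)
    fp = sum(1 for s, y in pairs if y == 0 and s >= threshold)
    return tp / (tp + fn), tn / (tn + fp)


def pick_threshold(scores, labels, min_sensitivity=0.95):
    """Highest threshold whose sensitivity still meets the target."""
    for t in sorted(set(scores), reverse=True):
        sens, spec = sensitivity_specificity(scores, labels, t)
        if sens >= min_sensitivity:
            return t, sens, spec
    return None


# Hypothetical model scores on a held-out set (1 = anomaly present)
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20, 0.10, 0.05]
labels = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]

strict = pick_threshold(scores, labels, min_sensitivity=1.0)
relaxed = pick_threshold(scores, labels, min_sensitivity=0.75)
```

Demanding that no anomaly is missed pushes the threshold down and lets one false alarm through; relaxing the target removes the false alarm at the cost of a missed case. That trade-off is exactly what radiologist feedback helps settle.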

This kind of model is useful, but trust is essential. That means transparency, ongoing evaluation, and human oversight. Over time, the model has to be re-evaluated and retrained as new anomalies or population shifts appear, which reflects how machine learning systems evolve in real deployments.

Risks and Ethical Considerations

It’s not all upside. Risks include bias, privacy invasion, adversarial attacks, and overreliance on automated decisions. For instance, a credit scoring model might systematically disadvantage certain groups if training data is skewed.

To mitigate risks:

- Implement fairness audits.

- Use explainability tools—like SHAP, LIME, or saliency maps.

- Involve diverse stakeholders in model design.

- Monitor performance over time to detect drift.

These steps aren’t optional; they’re essential for trustworthy AI.
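As one small piece of what a fairness audit might check, the sketch below compares approval rates across groups, a measure often called the demographic parity gap. The decisions and group labels are invented, and a single metric like this is only a starting point, not a full audit.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Share of positive decisions (1 = approved) per group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for d, g in zip(decisions, groups):
        totals[g] += 1
        positives[g] += d
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(decisions, groups):
    """Difference between the highest and lowest group approval rates."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical credit decisions for two groups of four applicants each
decisions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = selection_rates(decisions, groups)
gap = demographic_parity_gap(decisions, groups)
```

A large gap does not prove discrimination on its own, but it is a cheap signal that the training data or the model deserves a closer look.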

Organizational Readiness: Building Infrastructure and Culture

Scaling ML requires more than good models; it needs systems and culture.

- Data infrastructure—for collection, storage, labeling.

- MLOps pipelines—for continuous integration, deployment, monitoring.

- Governance frameworks—for risk, auditing, and compliance.

- Talent—skilled practitioners, domain experts, and operational teams.

Companies often struggle because they focus on prototypes but neglect the work of operationalizing deployment. Real impact comes from deploying models consistently, safely, and adaptably.
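Monitoring is the part of that operational work that is easiest to show in code. Below is a sketch of the population stability index (PSI), one way to compare a feature’s distribution in production against the training-time reference. The bin count, the small floor for empty bins, and the sample data are assumptions, and the code assumes the reference values are not all identical.

```python
import math


def psi(expected, actual, n_bins=5):
    """Population stability index between a reference and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / n_bins

    def bin_shares(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), n_bins - 1)  # clamp out-of-range values
            counts[idx] += 1
        # A small floor avoids log(0) for empty bins
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = bin_shares(expected), bin_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ai, ei in zip(a, e))


# Hypothetical feature values: training-time reference vs. shifted live data
reference = [i / 100 for i in range(100)]
shifted = [x + 0.5 for x in reference]

no_drift = psi(reference, reference)
drift = psi(reference, shifted)
```

Identical distributions score zero and the score grows as the live data moves away from the reference. What counts as “too much” drift is application-specific, so alert thresholds are best set from historical data.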

Trends to Watch: The Horizon Ahead

- TinyML—running models on edge devices like sensors and wearables.

- Federated learning—training across decentralized data sources, helpful in privacy-sensitive domains.

- Explainable AI becoming mandatory—regulators and users demand transparency.

- Energy-efficient training—as awareness grows around climate impact, greener training methods matter.

- Multimodal AI systems that combine vision, text, audio, maybe even smell sensors someday (OK, speculative, but scientists are reportedly exploring odor-detecting sensors).

These aren’t wild fantasies; many are underway across research labs and startups.
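To give one of these trends some shape, here is a sketch of the aggregation step in federated learning: each client trains locally and sends back parameters, and the server averages them weighted by how much data each client holds. The helper name and the numbers are invented, and the sketch leaves out local training, communication, and privacy mechanisms entirely.

```python
def federated_average(client_weights, client_sizes):
    """Average client parameter vectors, weighted by local sample count."""
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(w[j] * n for w, n in zip(client_weights, client_sizes)) / total
        for j in range(dim)
    ]


# Two hypothetical clients; the second holds three times as much data
global_weights = federated_average(
    client_weights=[[1.0, 2.0], [3.0, 4.0]],
    client_sizes=[1, 3],
)
```

The raw data never leaves the clients; only parameters travel, which is why the approach is attractive in privacy-sensitive domains.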

Concluding Summary

Machine learning models are the engines driving AI’s forward march, from small tweaks in recommendation systems to bold steps like autonomous vehicles or text generation. Building them is part art, part engineering: starting from data, then moving through algorithm selection, tuning, validation, and deployment. Emerging architectures like transformers and self-supervised methods are reshaping the terrain, while real-world deployments such as diagnostic tools illustrate both promise and pitfalls.

Risks like bias and opacity must be addressed with fairness audits, explainability, and governance. Meanwhile, scaling impact hinges on infrastructure, operations, cross-functional collaboration, and organizational maturity. Looking ahead, miniaturized models, privacy-preserving training, multimodal intelligence, and sustainable AI represent a frontier that’s immediately relevant.

Finally, though there’s unpredictability—some breakthroughs come from serendipity or messy human trials—the structured process, institutional discipline, and reflective oversight will distinguish models that merely perform from those that responsibly transform.

FAQs

What are the main types of machine learning models?

Machine learning models generally fall into supervised (labeled data), unsupervised (discovering structure without labels), and reinforcement learning (learning via feedback in dynamic environments). Each type is suited to different tasks—like classification, clustering, or sequential decision-making.

Why is transformer architecture so popular now?

Transformers excel at capturing long-range dependencies in sequential data, making them well suited to language and multimodal tasks. Their scalability and flexibility allow large language models (LLMs) to be fine-tuned or prompted for a wide range of applications.

How do organizations ensure ML models are fair and transparent?

Common approaches include fairness audits, explainability tools (e.g., SHAP, LIME, saliency maps), stakeholder reviews, and continuous monitoring to detect drift or bias. Regulatory pressures are also pushing for transparency, especially in sensitive domains.

What infrastructure supports deploying ML models effectively?

Effective deployment involves robust data pipelines, MLOps workflows for continuous integration and monitoring, model versioning tools, and performance tracking in production. Organizational culture and cross-team collaboration are equally important.

What’s the role of self-supervised learning in future models?

Self-supervised learning reduces reliance on labeled data by training models using proxy tasks—like predicting missing parts in inputs. This allows models to leverage vast unlabeled datasets efficiently and generalize better to downstream tasks.

Are there ethical concerns with machine learning models?

Yes—ethical concerns include data privacy, bias, discrimination, adversarial vulnerabilities, and overreliance on automated decisions. Mitigation requires technical controls, inclusive design, governance, and human oversight to maintain trust and safety.

That’s about it—maybe you noticed the occasional “and then,” or “so,” reflecting how thoughts spiral. But at least the nuts and bolts of machine learning models are front and center.

February 11, 2026
