Interactive eLearning has transformed how organizations train employees, educate students, and develop skills at scale. Unlike passive content consumption, interactive learning engages learners through decision-making, feedback loops, and participatory elements that dramatically improve retention and application. Figures widely attributed to the National Training Laboratories put retention at around 75% for practice-based methods compared to just 10% for passive reading. This article explores proven interactive eLearning techniques, real-world examples that drive measurable outcomes, and strategies you can implement immediately to create more engaging learning experiences.

What Makes eLearning Truly Interactive

Interactive eLearning goes beyond clicking “next” through slide decks. True interactivity occurs when learners actively participate in the learning process, making choices, solving problems, and receiving immediate feedback that shapes their understanding. The distinction between passive and interactive learning lies in the learner’s cognitive engagement level.

Passive eLearning includes watching videos without checkpoints, reading text without activities, and completing multiple-choice quizzes that simply confirm recall rather than build comprehension. Interactive eLearning, by contrast, requires learners to apply concepts, navigate scenarios, and construct knowledge through experience. The eLearning Industry reports that 83% of learners prefer interactive courses over static presentations, yet only 12% of corporate training programs fully leverage interactive elements.

The cognitive science behind this preference is well-established. Dual coding theory holds that combining verbal and visual channels creates stronger memory traces than single-channel learning, and hands-on practice adds a further pathway. When learners not only read about a process but simulate performing it, they develop procedural memory that transfers more effectively to real-world tasks. Branching scenarios, for instance, engage problem-solving processes that passive content simply cannot reach.

Modern interactive eLearning platforms leverage adaptive algorithms to personalize difficulty levels based on learner performance, creating individualized learning paths that maintain optimal challenge levels. This personalization distinguishes sophisticated interactive design from basic multimedia presentations, ensuring each learner receives instruction calibrated to their current competency.

Scenario-Based Learning: Decisions That Drive Results

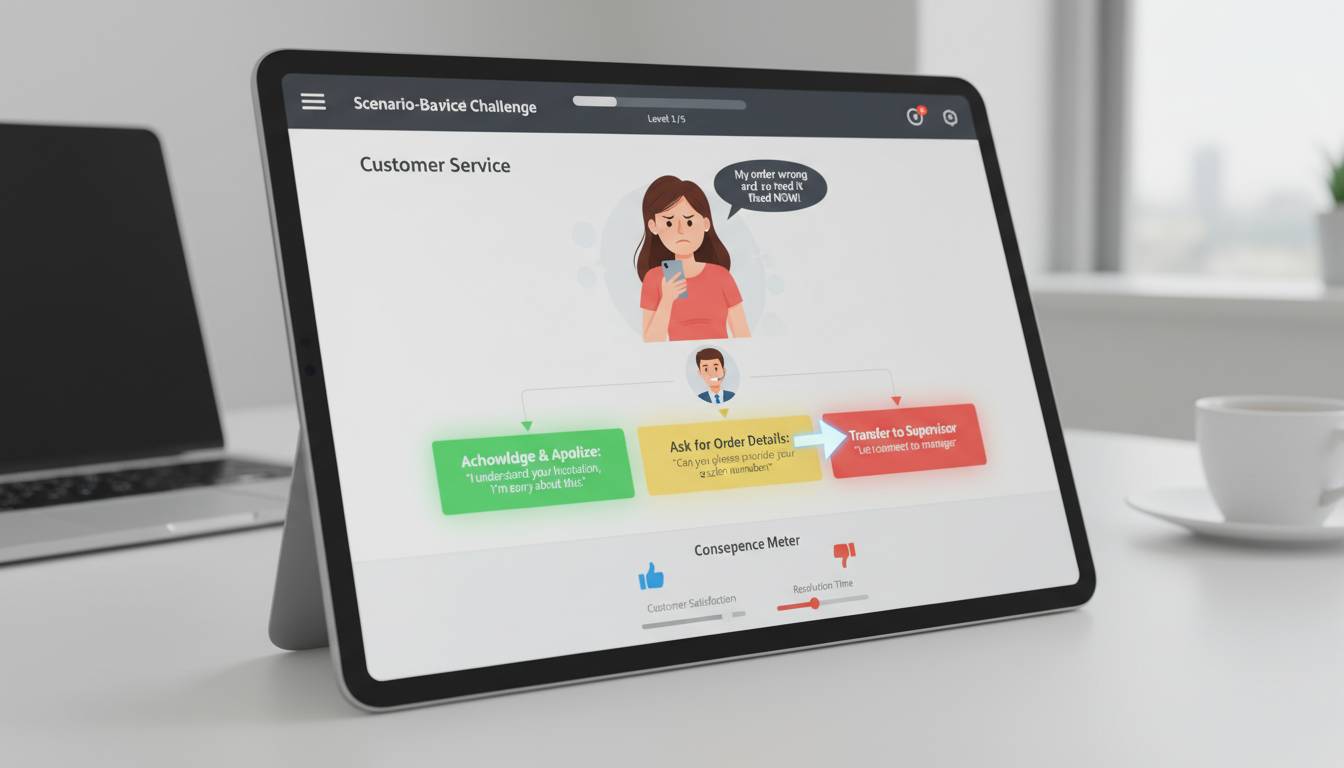

Scenario-based learning presents learners with realistic situations requiring application of knowledge and decision-making skills. Unlike case studies that describe past events, scenarios immerse learners as active participants facing consequences for their choices. This technique proves particularly effective for soft skills training, compliance education, and leadership development.

A Fortune 500 technology company implemented scenario-based cybersecurity training where employees navigated simulated phishing attacks, data breach responses, and social engineering attempts. The interactive module tracked not just correct answers but decision-making speed and reasoning patterns. Post-implementation data revealed a 72% reduction in successful phishing attempts among employees who completed the scenario training compared to those who only watched informational videos.

The effectiveness of scenarios stems from emotional engagement. When learners face realistic consequences—losing a simulated sale, failing to identify a compliance violation, or damaging a client relationship—the stakes feel genuine. This emotional investment activates the amygdala, strengthening memory consolidation. A study published in the Journal of Educational Psychology found that scenario-based learning produced 31% better transfer of skills to job performance compared to traditional instruction.

Designing Effective Scenarios

Strong scenarios include multiple decision points, realistic complexity, and meaningful consequences. Avoid artificial dilemmas with obvious “right answers” that experienced learners will immediately recognize. Instead, design scenarios that present genuine trade-offs where reasonable professionals might disagree.

Branch complexity matters significantly. Simple branching offers 2-3 paths with clear right/wrong outcomes. Advanced scenarios incorporate ambiguous situations requiring synthesis of multiple concepts, simulated time pressure, and cumulative consequences where earlier decisions impact later options. The goal is not to test memorization but to develop judgment.

Feedback within scenarios should explain why outcomes occurred, not simply announce success or failure. When a learner makes a suboptimal decision, immediate explanation of the reasoning behind better choices transforms the mistake into a learning moment. This feedback immediacy distinguishes interactive scenarios from traditional examinations where feedback arrives days or weeks later.
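The branching structure and explanatory feedback described above can be sketched as a small graph of decision nodes. This is a minimal illustration in Python, not the format of any particular authoring tool. The node ids, the `trust_delta` score used for cumulative consequences, and the phishing content are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Choice:
    label: str
    next_node: str          # id of the node this choice leads to
    feedback: str           # explains why the outcome occurred
    trust_delta: int = 0    # cumulative consequence carried forward


@dataclass
class Node:
    prompt: str
    choices: list[Choice] = field(default_factory=list)  # empty list = ending


# Hypothetical two-step phishing scenario.
SCENARIO = {
    "email": Node(
        "An email from 'IT Support' asks you to confirm your password.",
        [
            Choice("Click the link", "breach",
                   "The sender domain did not match the company's; "
                   "links in unsolicited requests should not be trusted.", -2),
            Choice("Report it to security", "followup",
                   "Reporting lets the security team warn other staff.", +1),
        ],
    ),
    "followup": Node(
        "A colleague says they already clicked the same link.",
        [
            Choice("Tell them to change their password and report it", "good_end",
                   "Fast containment limits how far stolen credentials spread.", +1),
            Choice("Ignore it", "breach",
                   "Unreported compromises give attackers more time.", -1),
        ],
    ),
    "breach": Node("The account is compromised."),
    "good_end": Node("The incident is contained."),
}


def play(scenario, start, picks):
    """Walk the scenario using a list of choice indexes.

    Returns the final node id, the cumulative trust score, and the
    feedback shown after each decision.
    """
    node_id, trust, shown = start, 0, []
    for pick in picks:
        node = scenario[node_id]
        if not node.choices:      # reached an ending; ignore further picks
            break
        choice = node.choices[pick]
        trust += choice.trust_delta
        shown.append(choice.feedback)
        node_id = choice.next_node
    return node_id, trust, shown
```

Because every choice carries its own feedback text and score change, the learner sees the reasoning at the moment of the decision, and earlier picks keep affecting the running total.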

Gamification Elements That Increase Completion Rates

Gamification applies game design elements to non-game contexts, leveraging motivational mechanics that humans naturally respond to. Points, badges, leaderboards, challenges, and narrative progression create psychological engagement that sustains motivation through lengthy learning journeys. The TalentLMS Gamification at Work Survey found that 78% of employees feel gamification makes them more productive, and 87% say it improves their learning experience.

Points systems provide immediate feedback on progress, creating a sense of accumulation that motivates continued engagement. Effective point systems award not just completion but quality—bonus points for speed, accuracy, or returning to review difficult sections. This encourages deep engagement rather than mere participation.
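A points rule that rewards quality as well as completion might look like the sketch below. The function name, parameters, and every weight are illustrative assumptions, not recommended values.

```python
def award_points(completed, accuracy, seconds_taken, target_seconds,
                 reviewed_hard_section):
    """Return points for one activity.

    accuracy is a fraction between 0.0 and 1.0. All weights are
    illustrative assumptions.
    """
    if not completed:
        return 0
    points = 100                       # base award for completion
    points += round(50 * accuracy)     # up to 50 extra points for accuracy
    if seconds_taken <= target_seconds:
        points += 20                   # speed bonus
    if reviewed_hard_section:
        points += 30                   # bonus for returning to review
    return points
```

Keeping the completion award separate from the bonuses makes it easy to tune how much weight quality carries relative to simply finishing.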

Badges recognize specific achievements, creating tangible milestones within the learning journey. Research from the University of Colorado found that badge systems increased course completion rates by 34% when badges represented meaningful skill acquisitions rather than arbitrary participation markers. Design badges that signify genuine competency: “Compliance Expert” carries more weight than “Module 3 Completer.”

Leaderboards introduce social comparison that drives competitive learners while potentially discouraging others. Implement optional leaderboards that learners can choose to display, or create team-based competitions that emphasize collaborative achievement over individual ranking. The key is understanding your audience—some organizations thrive on public competition while others require more private progress tracking.

Narrative and Progression Systems

Beyond points and badges, narrative structures create intrinsic motivation through story engagement. Learning pathways that unfold as chapters in a continuing scenario leverage humans’ innate appetite for narrative resolution. Learners return to discover what happens next, transforming mandatory training into content they look forward to.

Progression systems unlock content sequentially, creating a sense of advancement through visible progress bars, level-ups, or unlocked capabilities. This mechanic works because of the endowment effect—people value progress they’ve made more than equivalent unearned advantages. Learners who’ve “earned” access to advanced modules feel ownership of that achievement.
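Sequential unlocking with a visible progress figure can be sketched in a few lines. The module names and the rule that each module unlocks once the previous one is finished are assumptions made for illustration.

```python
# Hypothetical module order; each module unlocks once the one before it is complete.
MODULES = ["Orientation", "Emergency Dept", "Pharmacy", "Records"]


def progress(completed):
    """Return (unlocked modules, percent complete) for a learner.

    `completed` is the set of module names the learner has finished.
    """
    unlocked = []
    for module in MODULES:
        unlocked.append(module)
        if module not in completed:
            break                 # stop at the first unfinished module
    percent = round(100 * len(completed & set(MODULES)) / len(MODULES))
    return unlocked, percent
```

The returned percentage is what a progress bar would display, and the unlocked list is what the course menu would make clickable.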

A healthcare network implemented gamified compliance training featuring a hospital simulation where learners played as new administrators managing facility operations. Progression unlocked new departments, each introducing compliance scenarios relevant to that area. Completion rates rose to 94%, up from 61% for the previous text-based compliance course, and six-month retention tests showed 47% higher scores among gamified learners.

Simulation and Virtual Reality in Professional Training

Simulations replicate real-world processes with enough fidelity to develop procedural skills without real-world consequences. From flight simulators that have trained pilots for decades to virtual manufacturing equipment that lets maintenance technicians practice repairs, simulation-based training transfers effectively to job performance.

The fidelity question matters more than many designers assume. Low-fidelity simulations using simple clickable interfaces work well for declarative knowledge—memorizing steps in a process, identifying components, understanding workflows. High-fidelity simulations incorporating realistic interfaces, time pressure, and complex variables prove superior for procedural skills requiring muscle memory and rapid decision-making.

Virtual reality (VR) represents the extreme end of simulation fidelity. VR training has shown remarkable results in high-stakes industries. Walmart implemented VR training for customer service scenarios, disaster response, and equipment operation, reporting that employees trained in VR performed 10% better in live exercises than those trained through traditional methods. The immersive nature of VR activates spatial memory and creates stronger environmental associations that transfer to physical spaces.

When Simulations Deliver ROI

Simulation investment makes sense when several conditions align: the skill involves procedural knowledge that requires practice, errors in real performance carry significant costs, the trained population is large enough to amortize development expenses, and the skill degrades without periodic practice. Medical procedures, equipment operation, emergency response, and sales conversations all meet these criteria.

A nuclear power company developed full-scope simulations for reactor operator training, replicating control room interfaces with complete accuracy. Operators could practice startup sequences, abnormal procedure responses, and emergency shutdowns thousands of times without any safety risk. Analysis showed that operators maintained certification pass rates 23% higher with simulation-based training, and incident response times improved by 31% during drills.

The limitation of simulations is development cost. Building accurate simulations requires subject matter expert input, technical development, and extensive testing. However, advances in authoring tools and VR platforms have dramatically reduced these costs, making simulation-based training accessible for mid-sized organizations rather than just enterprise deployments.

Adaptive Learning and Personalized Pathways

Adaptive learning systems adjust content delivery based on individual learner performance, creating personalized experiences that optimize efficiency. When a learner demonstrates mastery of foundational concepts, the system accelerates past review material. When struggle appears, the system provides additional scaffolding, alternative explanations, or prerequisite remediation.

The fundamental principle is zone of proximal development—learning is most effective when instruction targets slightly beyond current competency. Too easy feels patronizing; too difficult creates frustration and disengagement. Adaptive systems continuously recalibrate to maintain learners in this productive challenge zone.

Implementation ranges from simple rule-based systems to sophisticated AI-driven platforms. Basic adaptive learning uses decision trees: if quiz score falls below threshold, present remediation module; if score exceeds threshold, skip to advanced content. AI-driven systems analyze patterns across thousands of learners to predict which content pieces will most efficiently close individual knowledge gaps.
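The rule-based end of that range fits in a single function. This sketch assumes two thresholds with a middle band for learners who need neither remediation nor acceleration. The threshold values and the returned labels are hypothetical.

```python
def next_step(quiz_score, remediation_below=0.6, advance_at=0.85):
    """Pick the next content type from a quiz score between 0.0 and 1.0.

    Both thresholds are illustrative assumptions.
    """
    if quiz_score < remediation_below:
        return "remediation"      # struggling: add scaffolding
    if quiz_score >= advance_at:
        return "advanced"         # mastery shown: skip review material
    return "standard"             # continue on the default path
```

A rule like this is easy to audit and explain to learners, which is often a reason to start here before moving to a model-driven system.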

A professional certification organization implemented adaptive learning for accounting exam preparation. The system tracked response patterns, time spent on concepts, and error types to build detailed learner profiles. Results showed average study time reduced by 40% while first-attempt pass rates increased by 18%. Learners reported feeling more confident because the system ensured they addressed genuine weaknesses rather than spending time on already-mastered material.

Building Adaptive Sequences

Effective adaptive design requires robust content libraries with multiple explanations for key concepts. One learner’s confusion might respond to a visual diagram while another needs a concrete example. Building these variant pathways demands upfront investment but determines adaptive system effectiveness.

Analytics dashboards help instructors understand where learners struggle and which content variants perform best. This feedback loop enables continuous improvement—the system learns from aggregate learner data which approaches work, and designers refine content based on performance patterns. Organizations implementing adaptive learning should plan for iterative refinement rather than assuming initial deployment will be optimal.
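One simple form of that feedback loop is to compare pass rates across content variants for each concept. The sketch below assumes a hypothetical log of `(concept, variant, passed)` records, where `passed` means the learner answered the follow-up check correctly.

```python
from collections import defaultdict


def best_variants(attempts):
    """Return the best-performing variant per concept.

    `attempts` is an iterable of (concept, variant, passed) tuples.
    The result maps each concept to (variant, pass_rate).
    """
    totals = defaultdict(lambda: [0, 0])   # (concept, variant) -> [passes, tries]
    for concept, variant, passed in attempts:
        totals[(concept, variant)][0] += int(passed)
        totals[(concept, variant)][1] += 1

    best = {}
    for (concept, variant), (passes, tries) in totals.items():
        rate = passes / tries
        if concept not in best or rate > best[concept][1]:
            best[concept] = (variant, rate)
    return best
```

With only a handful of attempts per variant the pass rates will be noisy, so in practice it makes sense to wait for a reasonable sample before retiring a variant.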

Interactive Assessment Beyond Multiple Choice

Traditional assessments often measure recognition rather than genuine competency. Interactive assessment techniques evaluate applied knowledge through scenarios, performance tasks, and constructed responses that require genuine understanding.

Scenario-based assessment embeds evaluation within realistic contexts. Rather than asking “What should you do when you discover a data breach?”—which learners can answer by recognizing keywords—the assessment presents an actual discovery situation and evaluates the learner’s response sequence. This approach measures judgment, not just recall.
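Evaluating a response sequence, rather than a single answer, can be approximated by checking which required actions the learner took and whether key actions happened in the right order. The rubric format and action names below are hypothetical. A real assessment would define them with subject matter experts.

```python
def score_sequence(actions, required, must_precede):
    """Score a learner's ordered actions in a scenario.

    actions       -- action names in the order the learner took them
    required      -- actions that must appear at least once
    must_precede  -- (earlier, later) pairs that must occur in that order
    Returns a fraction between 0.0 and 1.0.
    """
    position = {}
    for i, action in enumerate(actions):
        position.setdefault(action, i)     # index of first occurrence

    checks = [action in position for action in required]
    checks += [
        a in position and b in position and position[a] < position[b]
        for a, b in must_precede
    ]
    return sum(checks) / len(checks) if checks else 0.0
```

Each required action and each ordering rule counts as one check, so a learner who restores service before isolating the affected system loses credit even if every action eventually appears.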

Performance tasks simulate job activities directly. A leadership training program might assess through a simulated team meeting where the learner must respond to employee concerns, allocate resources, and manage conflict. Automated systems evaluate response quality, including whether the learner prioritizes information appropriately and maintains professional communication. Such assessments predict job performance far better than knowledge tests.

Constructed response questions require learners to explain reasoning, not just select options. When learners must articulate why a particular approach makes sense or analyze trade-offs in a complex situation, they demonstrate deeper processing that better prepares them for real-world problem-solving.

Implementing Effective Interactive Assessments

Design assessments that mirror real job challenges as closely as possible. Identify the most critical decisions learners will face in applying training, then create assessment scenarios requiring those decisions. Feedback should explain correct reasoning, transforming assessment from a judgment into a final learning opportunity.

Formative assessment—ongoing checks throughout learning rather than only end-of-course testing—improves both learning and evaluation. Embedded knowledge checks, reflection prompts, and practice activities provide data about learner progress while reinforcing key concepts. Research from Carnegie Mellon shows that frequent low-stakes testing improves long-term retention more than equivalent study time without testing.

Common Interactive eLearning Mistakes to Avoid

Many organizations invest in interactive elements without achieving corresponding learning outcomes. Understanding common pitfalls helps designers create genuinely effective experiences rather than superficially engaging but educationally hollow content.

Over-emphasis on entertainment over learning. Interactive elements should serve educational objectives, not merely amuse. A spectacularly fun game that doesn’t actually develop target skills wastes learner time and organization resources. Evaluate every interactive element against the question: does this help learners perform better on the job? If not, cut it regardless of how engaging it might be.

False interactivity. Click-to-continue interfaces, mandatory clicks that don’t change content, and “interactive” elements that simply reveal additional text frustrate learners who recognize they’re being manipulated into perceived engagement. True interactivity requires meaningful agency—choices that genuinely affect outcomes, feedback that responds to learner input, content that adapts to demonstrated needs.

Ignoring mobile contexts. Many learners complete training on mobile devices, yet interactive designs optimized for desktop fail on smaller screens. Touch interactions, reduced screen real estate, and intermittent connectivity all require thoughtful design adaptation. Test interactive modules on actual mobile devices used by your learner population.

Insufficient learner support. Interactive modules sometimes assume learners understand interfaces or navigation when they don’t. Include clear instructions, accessible help functions, and logical information architecture. A brilliant interactive scenario fails if learners can’t figure out how to begin.

Implementation Strategy and Getting Started

Implementing effective interactive eLearning requires balancing ambition with practical constraints. Start with high-impact, lower-complexity techniques before attempting ambitious simulations or adaptive systems.

Begin with scenario-based branching for your highest-priority training topics. These require authoring tools that are increasingly accessible and provide strong ROI for compliance, customer service, and leadership training. Build internal capability with these projects before attempting advanced technologies.

Invest in quality over quantity. A single excellently designed interactive module outperforms five mediocre ones. Learner skepticism toward “mandatory training” means your first interactive project establishes reputation. Choose scope carefully—it’s better to do one thing brilliantly than several things adequately.

Measure effectiveness rigorously. Define success metrics before launch: completion rates, assessment scores, time-to-competency, on-the-job performance changes, or reduced error rates. Compare results against previous training approaches to demonstrate ROI and identify improvement opportunities.

Frequently Asked Questions

What is the most effective interactive eLearning technique?

Scenario-based learning consistently delivers the strongest results across industries and skill types. By placing learners in realistic situations where they make decisions and face consequences, scenario-based training activates problem-solving processes that transfer effectively to real-world performance. The retention figures cited in the introduction, 75% for active methods compared to 10% for passive reading, point the same way.

How much does interactive eLearning development cost?

Costs vary dramatically based on complexity. Basic interactive modules using branching scenarios and simple feedback can cost $3,000-$10,000 for a 30-minute course. Advanced simulations, virtual reality experiences, and adaptive learning systems can range from $25,000 to $500,000 or more for enterprise deployments. However, ROI typically justifies investment through improved performance and reduced training time.

How long does it take to create interactive eLearning?

Development timelines depend on content complexity, interactivity level, and available resources. A basic interactive module might take 4-8 weeks with experienced developers. Complex simulations or VR experiences often require 3-6 months. Most organizations can deploy initial interactive projects within 8-12 weeks when starting with established authoring tools and clear learning objectives.

What tools are best for creating interactive eLearning?

Popular authoring platforms include Articulate Rise 360 (user-friendly, robust features), Adobe Captivate (powerful simulation capabilities), and Vyond (animation-focused). For organizations building extensive custom content, learning experience platforms like TalentLMS or Docebo offer integrated gamification, adaptive learning, and analytics. Choose tools based on your team’s technical skills, budget, and specific interactive requirements.

How do you measure the ROI of interactive eLearning?

Establish baseline metrics before implementation—current completion rates, assessment scores, time-to-competency, error rates, or performance ratings. After deploying interactive training, measure the same metrics and calculate improvements. Include hard metrics like reduced training time, decreased support tickets, improved sales figures, or lower error rates alongside softer measures like learner satisfaction and engagement scores.
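The before-and-after comparison reduces to a relative change per metric. This is a minimal sketch. The function name and the dictionary layout are assumptions, and it reports change relative to the baseline rather than a difference in percentage points.

```python
def percent_change(baseline, after):
    """Relative change per metric, as a percentage of the baseline value.

    Metrics missing from `after`, or with a zero baseline, are skipped.
    """
    return {
        name: round(100 * (after[name] - baseline[name]) / baseline[name], 1)
        for name in baseline
        if name in after and baseline[name] != 0
    }
```

For instance, the completion rates from the healthcare example earlier (61 before, 94 after) give a relative change of 54.1%, which is not the same figure as the 33-percentage-point gain, so state which one a report is using.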

March 20, 2026
