Most eLearning content fails learners. They complete it because they must, not because they find it valuable. They skim slides, guess on quizzes, and retain almost nothing within days. I’ve witnessed this pattern repeatedly—as someone who’s sat through countless hours of mandatory corporate training and as an instructional designer who once created content I knew wasn’t working.

The reality is that engaging eLearning follows predictable principles. It’s not a creative accident—it’s a deliberate process built on solid learning science. This guide walks you through that process step by step.

What Makes eLearning Content Engaging?

The core principle: learners retain information through active participation, not passive reception. Research from the RAND Corporation found that interactive learning approaches improve retention by 50-60% compared to lecture-based formats in corporate training settings (Sitzmann, 2011, Journal of Educational Psychology). This isn’t surprising when you consider cognitive science—we remember what we actively process.

Engagement combines several elements working together: relevance to the learner’s actual job, clear organization so they know where they are, interactive elements requiring mental participation, immediate feedback, and visual design that supports rather than exhausts them.

Without these components, even the most researched content loses learners. Modern learners bring expectations from consumer digital experiences—they want personalization, interactivity, and control over pace. Content ignoring these expectations feels dated, regardless of learning objective quality.

Step 1: Analyze Your Audience

Most projects skip this step, and they suffer for it. In my experience designing training across industries, the difference between effective and ineffective content often traces back to the depth of the audience analysis.

Start with demographics. What’s the average age? How tech-savvy are they? What industries do they work in, and what job roles do they hold? Understanding the context where learners apply knowledge helps maintain relevance throughout.

Demographics only get you so far. You also need psychographics. Why are they taking this course? Do they want to learn, or is this mandatory compliance training they’re dreading? The answer changes everything—what examples land, what motivates completion, how you frame content.

Gather this through surveys, interviews with subject matter experts, analysis of past programs, and performance data from previous training. This research phase takes time but saves much more later.

Step 2: Define Clear Learning Objectives

Learning objectives form your foundation. Without them, you can’t measure whether your eLearning actually works. These statements describe what learners will do after completing training—specific enough to guide content creation, measurable for evaluation.

Follow the SMART framework: Specific, Measurable, Achievable, Relevant, and Time-bound. “Understand customer service principles” is useless. “Resolve customer complaints within 24 hours using the company’s three-step escalation protocol”—that’s something you can teach and test.

Consider Bloom’s taxonomy levels. Are you teaching basic knowledge, or do learners need to apply, analyze, or evaluate? Higher-order objectives need different activities and assessments. Research published in Educational Technology Research and Development indicates that misaligned assessments—testing recall when objectives require application—reduce learning transfer by up to 40% (Kulik et al., 2008).

Group objectives logically. Aim for three to five major objectives per module, each with two to four sub-objectives. This hierarchy informs navigation and structure.
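As a rough illustration of that grouping rule, here is a minimal Python sketch that checks a module outline against the three-to-five and two-to-four ranges. The `Objective` class and `check_module` function are hypothetical names made up for this example, not part of any authoring tool.

```python
from dataclasses import dataclass, field


@dataclass
class Objective:
    """One major learning objective and its sub-objectives (hypothetical structure)."""
    statement: str
    sub_objectives: list[str] = field(default_factory=list)


def check_module(objectives: list[Objective]) -> list[str]:
    """Return warnings where a module departs from the suggested grouping."""
    warnings = []
    if not 3 <= len(objectives) <= 5:
        warnings.append(f"module has {len(objectives)} major objectives; aim for 3-5")
    for obj in objectives:
        n = len(obj.sub_objectives)
        if not 2 <= n <= 4:
            warnings.append(f"'{obj.statement}' has {n} sub-objectives; aim for 2-4")
    return warnings
```

An empty list means the outline fits the guideline. Treat the warnings as prompts to rethink the structure, not as hard failures.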

Step 3: Choose the Right Content Format

Format matters significantly. Different topics, audiences, and objectives call for different approaches. Forcing content into wrong formats creates friction that kills engagement.

Scenario-based learning works well for complex topics requiring decision-making skills. Instead of abstract rules, you place learners in realistic situations where they apply knowledge to solve problems. Research indicates scenario-based learning can achieve 93% engagement rates in technical training (Clark & Mayer, 2016, E-Learning and the Science of Instruction). It is especially effective for compliance training, soft skills, and troubleshooting.

Microlearning breaks content into small chunks of five to ten minutes, which fits busy schedules. According to the Association for Talent Development, microlearning can increase retention by 20-50% compared to traditional formats. It works best for just-in-time learning, reinforcing previous material, or topics that segment easily.

Gamification adds points, badges, and leaderboards. Research published in Computers & Education found gamification can improve performance by 14-48% (Dicheva et al., 2015). It doesn’t suit every subject, but it boosts motivation for voluntary training, especially with younger audiences or when building learning habits.

Video continues growing because it’s familiar and accessible. But passive video is still passive. Add quizzes, branching choices, or reflection prompts. Conversational tone and regular knowledge checks matter more than production polish.

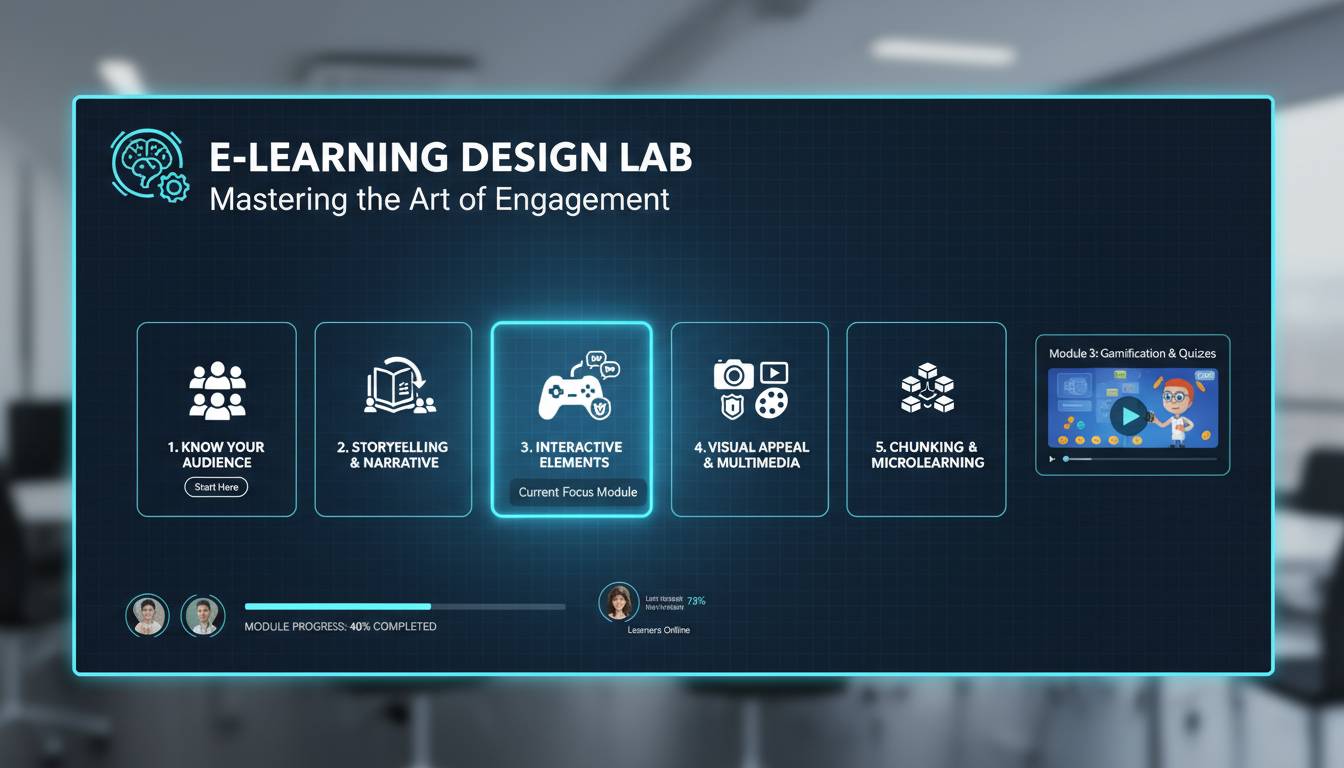

Step 4: Create Interactive Elements

Interactivity transforms content from digital pages into actual learning experiences. The key is meaningful participation—learners need to think, respond, and decide.

Branching scenarios give learners decision points where their choices change what happens next. Perfect for teaching decision-making because learners experience consequences without real risk. A customer service course might branch based on how the learner responds to an angry customer, with different paths leading to different outcomes.
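One way to picture a branching scenario is as a small graph of nodes, where each choice points to the next node. The sketch below assumes a made-up customer service scenario. The node ids, the text, and the `play` helper are all hypothetical, and real authoring tools store branches in their own formats.

```python
# Hypothetical scenario: each node has display text and a map of choice -> next node id.
SCENARIO = {
    "start": {
        "text": "A customer says their order arrived damaged and they are furious.",
        "choices": {"apologize": "listen", "quote_policy": "escalates"},
    },
    "listen": {
        "text": "The customer calms down and explains the problem.",
        "choices": {"offer_replacement": "resolved", "offer_nothing": "escalates"},
    },
    "resolved": {"text": "The customer accepts the replacement.", "choices": {}},
    "escalates": {"text": "The customer asks for a manager.", "choices": {}},
}


def play(scenario, decisions, start="start"):
    """Follow a list of decisions through the scenario and return the visited node ids."""
    node_id = start
    path = [node_id]
    for decision in decisions:
        choices = scenario[node_id]["choices"]
        if decision not in choices:
            raise ValueError(f"{decision!r} is not a choice at {node_id!r}")
        node_id = choices[decision]
        path.append(node_id)
    return path
```

Calling `play(SCENARIO, ["apologize", "offer_replacement"])` walks the path that ends at the "resolved" node, while `["quote_policy"]` leads straight to the escalation. Writing the branches out this way also makes it easy to spot dead ends before you build anything.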

Drag-and-drop activities work for matching, categorization, or sequencing. They engage motor memory and provide immediate visual feedback. Common uses: pairing terms with definitions, ordering process steps, categorizing examples.

Hotspots and click-to-reveal let learners explore visuals to find information. They work well for product training, safety procedures, and familiarizing learners with objects or environments. Letting learners choose what to click turns a static image into something they explore.

Simulations recreate real-world processes in a controlled digital environment. They range from simple walk-throughs to complex immersive experiences that mimic actual job tasks. High-fidelity simulations require significant development investment but pay off when real-world mistakes are costly, as in medical, aviation, and industrial safety training.

Step 5: Design for Visual Appeal

Visual design affects perceived quality and learner confidence, but more importantly, it impacts cognitive load and learning. Good design isn’t about flashy graphics—it’s about organizing information to support learning.

White space gives content room to breathe and helps learners focus. Crowded screens with too much text and graphics overwhelm working memory and cause faster fatigue. Break content across multiple screens with generous spacing—even when covering substantial material.

Color matters beyond decoration. Consistent color coding helps learners recognize content types, navigation, and interactive elements. Contrast ratios affect readability, especially for learners with visual impairments. A limited color palette creates professional coherence.
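Contrast ratio is something you can compute rather than eyeball. The sketch below assumes the WCAG 2.x definitions of relative luminance and contrast ratio, which this guide does not itself name. Under those guidelines, 4.5:1 is the usual minimum for normal-size body text. The function names are my own.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x definition)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors: 1.0 for identical colors, 21.0 for black on white."""
    l1, l2 = relative_luminance(foreground), relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)
```

For example, `contrast_ratio("#767676", "#FFFFFF")` comes out just above 4.5, so that gray is about the lightest you can use for body text on a white background under the 4.5:1 threshold.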

Typography influences readability and perceived difficulty. Clean fonts at proper sizes ensure comfortable consumption across devices. Consistent heading styles help learners scan and navigate. Varying weights and sizes creates visual hierarchy that guides attention.

Images should serve a purpose, not just fill space. Relevant visuals reinforce concepts, provide context, break up text. Abstract decorative images add clutter without learning value. Ask: does this visual support the objective?

Step 6: Incorporate Multimedia

Cognitive scientists such as Richard Mayer have studied how people process multimedia. The core finding: learners process visual and auditory information through separate channels. Overload either channel, and learning suffers. This research suggests that well-designed multimedia instruction can improve learning by up to 50% compared to text-only instruction (Mayer & Moreno, 2003).

Video excels at demonstrating processes, procedures, human interactions—things hard to convey in text or static images. Clear and relevant beats polished. A rough video that clearly shows a skill outperforms a polished one with unnecessary fluff.

Audio—narration and sound effects—adds dimension but needs restraint. Narration guides learners through complex visuals. Sound effects can reinforce feedback or create atmosphere. But auto-playing audio frustrates people, so always provide controls and use audio only when it adds real value.

Animation brings static concepts to life and illustrates processes that are hard to capture otherwise. Keep animations focused on the learning objective, because gratuitous motion distracts. Complex animations also risk alienating learners on slower connections or older devices.

The most engaging multimedia is interactive—it requires participation rather than passive consumption. The best eLearning thoughtfully combines media types to create varied experiences that maintain interest and support different learning preferences.

Step 7: Build in Feedback Mechanisms

Feedback turns learning from one-way transmission into a responsive dialogue. Without it, learners can’t tell if they’re on track, and misunderstandings become entrenched. Research shows immediate feedback can increase correct response rates by 10-15% (Kulik & Fletcher, 2016, Review of Educational Research). Effective feedback is immediate, specific, and constructive.

Correct answer feedback explains why the answer is right, reinforcing the concept. Don’t just mark it correct—help learners connect their response to the underlying principle. When someone correctly identifies the first step in a troubleshooting procedure, explain why that step comes first and what follows from getting it right.

Incorrect answer feedback might be more important, because a wrong answer is a learning opportunity. Instead of just saying “wrong,” explain the likely misconception and guide the learner toward the correct answer. Help learners understand their error, not just avoid it next time.
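In practice, this usually means writing feedback per answer option rather than one generic "correct" or "incorrect" message. Here is a minimal sketch, assuming a made-up troubleshooting question. The `QUESTION` structure and `give_feedback` function are hypothetical and not tied to any particular quiz engine.

```python
# Hypothetical question: each option carries its own explanation, so feedback
# can address the specific misconception behind a wrong choice.
QUESTION = {
    "prompt": "What is the first step when a printer will not print?",
    "correct": "check_power",
    "options": {
        "check_power": "Right: confirming power and cables first rules out the "
                       "simplest cause before you change any settings.",
        "reinstall_driver": "Not yet: reinstalling the driver assumes a software "
                            "fault. Rule out power and cabling first.",
        "replace_printer": "Not yet: replacement is a last resort once simpler "
                           "causes are ruled out.",
    },
}


def give_feedback(question: dict, answer: str) -> tuple[bool, str]:
    """Return (is_correct, explanation) for the chosen option."""
    if answer not in question["options"]:
        raise KeyError(f"unknown option: {answer}")
    return answer == question["correct"], question["options"][answer]
```

Note that the correct option gets an explanation too, which matches the advice above about reinforcing why a right answer is right.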

Progress indicators show learners where they are and how much remains. This transparency reduces anxiety and helps them decide whether to continue or save for later. Percentages, completed items, remaining modules, or time estimates—pick what fits your content.

Social learning features such as discussion boards, peer reviews, and collaborative projects add feedback through learner interaction. Research from the National Training Laboratory indicates that retention reaches 75% when learners teach the material to others, compared to 50% through practice alone. These features are great for soft skills, exploring perspectives, and building networks, but they need facilitation to stay productive.

Step 8: Test and Iterate

Even carefully designed content benefits from real-world testing. What works in theory doesn’t always work when actual learners engage with it. Building evaluation into development catches problems before they impact everyone.

Pilot testing with a small representative group gives insights you can’t see as a content creator. Pilot learners find confusion points you missed, surface technical issues you didn’t anticipate, and give feedback on pacing and engagement. Plan for at least one round before full deployment.

Learning analytics reveal how learners actually interact with your content at scale. Watch completion rates, time spent on different sections, assessment scores, and learner feedback. Low completion might mean pacing issues or irrelevant content. Questions that learners consistently miss might mean the content didn’t adequately address the related objective. Analytics transform assumptions into evidence.
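Two of those signals, completion rate and consistently difficult questions, are simple to compute once you have an export of learner records. The sketch below uses a made-up record format and a hypothetical 50% threshold. Your LMS export will look different, so treat the field names as assumptions.

```python
from statistics import mean

# Hypothetical export: one record per learner, with 1/0 scores per question.
RECORDS = [
    {"learner": "a", "completed": True, "scores": {"q1": 1, "q2": 0, "q3": 1}},
    {"learner": "b", "completed": True, "scores": {"q1": 1, "q2": 0, "q3": 1}},
    {"learner": "c", "completed": False, "scores": {"q1": 1, "q2": 0}},
    {"learner": "d", "completed": True, "scores": {"q1": 0, "q2": 1, "q3": 1}},
]


def completion_rate(records):
    """Share of learners who finished the course, from 0.0 to 1.0."""
    return sum(r["completed"] for r in records) / len(records)


def hard_questions(records, threshold=0.5):
    """Question ids whose share of correct answers falls below the threshold."""
    by_question = {}
    for r in records:
        for qid, score in r["scores"].items():
            by_question.setdefault(qid, []).append(score)
    return sorted(q for q, s in by_question.items() if mean(s) < threshold)
```

With the sample data, three of four learners completed the course and only `q2` falls below the threshold, which would be the first place to check whether the content actually covers that objective.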

Improvement should be an ongoing process, not a one-time event. Establish a schedule for reviewing and updating content based on learner feedback, performance data, and subject matter changes. Outdated content damages credibility and may contain incorrect information. Regular maintenance matters for quality and engagement.

Common Mistakes to Avoid

Knowing what not to do matters as much as best practices.

Overloading content is the most frequent error. The desire to be comprehensive leads to information dumps that overwhelm learners and obscure key points. If everything seems important, nothing is important. Prioritize ruthlessly. Save supplementary information for optional resources.

Neglecting mobile design assumes learners always access content on desktops. In reality, significant learning happens on phones and tablets—especially for just-in-time and informal training. Research by the Pew Research Center indicates 91% of adults use mobile devices for online access. Content that doesn’t work well on mobile frustrates learners and limits when and where training can occur.

Ignoring accessibility excludes learners with disabilities and often violates legal requirements. At a minimum, provide alternative text for images, sufficient color contrast, proper heading structures for screen readers, and keyboard navigation for interactive elements. Accessible design benefits everyone, not just those with identified disabilities.

Failing to align assessments with objectives creates a disconnect. If objectives require application but assessments only test recall, you can’t verify learning occurred. Assessment design deserves as much attention as content design.
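A quick alignment check can catch this before launch. The sketch below assumes you tag each objective and each assessment item with a level from the revised Bloom’s taxonomy. The level names follow that taxonomy, while the data shapes and the `misaligned` function are hypothetical.

```python
# Levels of the revised Bloom's taxonomy, lowest to highest.
BLOOM_LEVELS = ["remember", "understand", "apply", "analyze", "evaluate", "create"]


def misaligned(objectives: dict[str, str], items: list[dict]) -> list[str]:
    """Return objective ids with no assessment item at or above the objective's level."""
    rank = {level: i for i, level in enumerate(BLOOM_LEVELS)}
    flagged = []
    for obj_id, level in objectives.items():
        tested = [rank[i["level"]] for i in items if i["objective"] == obj_id]
        if not tested or max(tested) < rank[level]:
            flagged.append(obj_id)
    return flagged
```

For instance, an objective tagged "apply" that is covered only by "remember" items would be flagged, as would an objective with no items at all.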

Best Tools for Creating Engaging eLearning

The right authoring tools affect both development efficiency and interactive capabilities. Understanding the landscape helps you choose for your needs and budget.

Articulate Rise 360 combines ease of use with good capabilities, especially for organizations using Learning Management Systems. Its modular approach makes creating structured courses simple, and it handles technical complexity so designers can focus on learning design.

Adobe Captivate provides more advanced features, such as sophisticated simulations and software demonstrations, for those willing to invest in the learning curve. It is strong for technical and compliance training that requires precise process documentation.

Articulate Storyline remains popular for its flexibility and community resources. It offers more customization than Rise while staying accessible to non-programmers.

Open-source options like H5P provide cost-effective alternatives. H5P integrates with many LMSs and offers a variety of interaction types, though it may need more technical setup than commercial tools.

How to Measure Learner Engagement

Measuring engagement requires looking beyond completion rates to understand how learners actually interact with and respond to content. Combine multiple data sources.

Behavioral metrics show what learners do: time on task, navigation patterns, interaction frequency, and content access patterns. They reveal whether learners skim, review, or abandon content. Significant variance across modules may indicate pacing issues.

Performance metrics from assessments reveal whether learning occurred. High completion with poor performance suggests rushing without comprehension. Look for patterns in incorrect answers that indicate specific content needing work.
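One simple way to look for those patterns is to tally which wrong option learners pick most often for each question. The sketch below assumes responses arrive as (question id, chosen option) pairs alongside an answer key. Both shapes and the function name are hypothetical.

```python
from collections import Counter


def common_wrong_answers(responses, answer_key):
    """Map each question id to its most frequently chosen wrong option and its count."""
    wrong = {}
    for qid, chosen in responses:
        if chosen != answer_key[qid]:
            wrong.setdefault(qid, Counter())[chosen] += 1
    return {qid: counts.most_common(1)[0] for qid, counts in wrong.items()}
```

If most wrong answers to a question cluster on a single option, that usually points to one specific misconception worth addressing in the content or in that option’s feedback.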

Self-reported engagement through surveys captures the learner experience that data might miss. Learners can articulate relevance, motivation, anticipated application. Qualitative data complements quantitative.

Completion rates remain foundational despite limitations—they indicate perceived value. Compare across courses, populations, and time periods to identify trends. Research indicates that perceived autonomy in learning can increase completion rates by 20-25% (Noe et al., 2014, Industrial and Organizational Psychology).

Frequently Asked Questions

What makes eLearning content engaging?

Engaging eLearning combines relevance to learner needs, interactive elements requiring participation, clear organization, visual appeal, and immediate feedback. The key: learners feel they’re discovering useful information, not passively receiving it.

How long should an eLearning module be?

Optimal length depends on complexity and attention patterns, but research suggests 10-15 minutes maintains better engagement than longer segments. Breaking longer courses into shorter modules gives natural completion points and fits busy schedules.

February 24, 2026
