Measuring the effectiveness of online training programs has become a critical competency for organizations investing in employee development. With companies spending billions annually on digital learning initiatives, understanding what works and what doesn’t directly impacts both workforce performance and budget allocation. The challenge lies in moving beyond simple completion metrics to capture genuine skill development, behavioral change, and measurable business outcomes.

This guide provides a comprehensive framework for evaluating online training programs, combining established evaluation models with practical measurement techniques you can implement immediately.

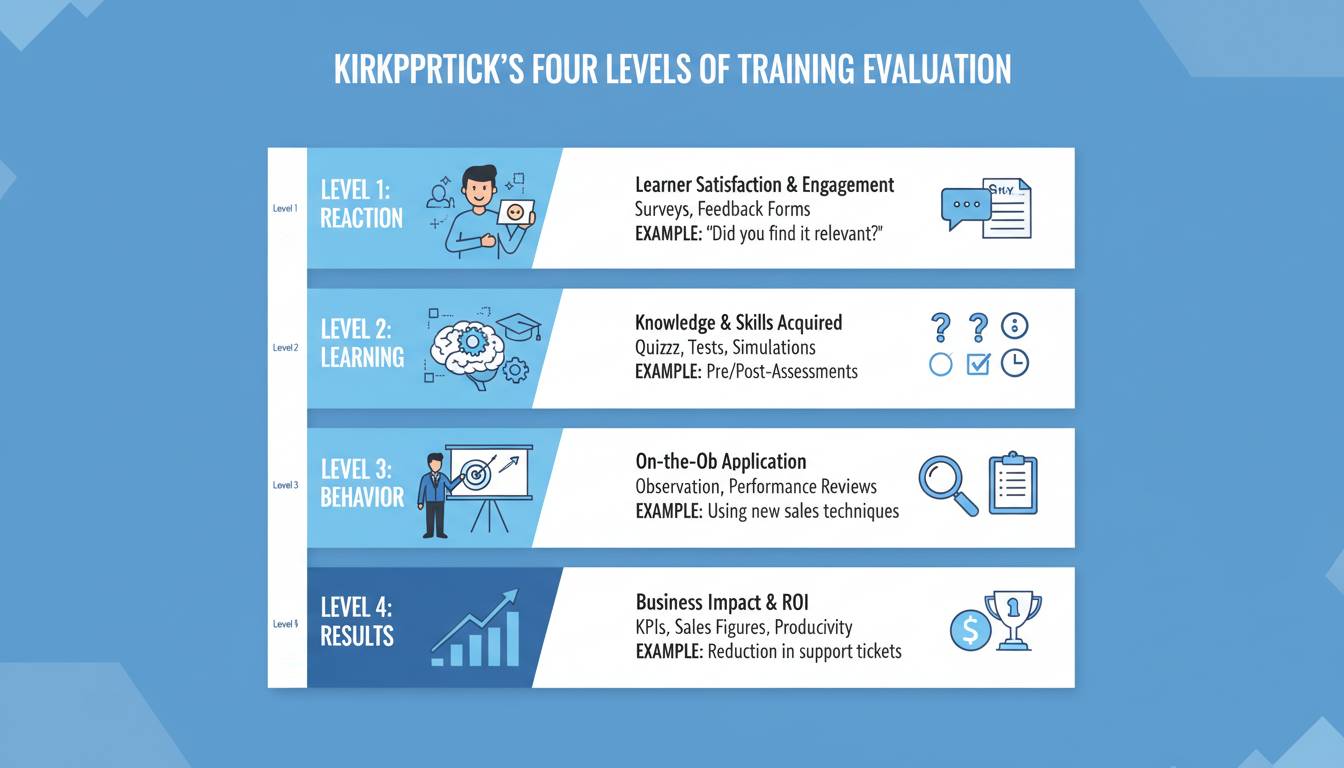

Understanding the Kirkpatrick Model

The Kirkpatrick Model remains one of the most widely used frameworks for training evaluation, providing a four-level hierarchy that measures different aspects of learning effectiveness.

Level 1: Reaction measures how participants perceived the training experience. Did they find it engaging, relevant, and valuable? This level captures immediate satisfaction through surveys and feedback forms. While often dismissed as superficial, reaction data reveals critical issues—if learners find content boring or irrelevant, behavior change becomes unlikely regardless of how well-designed the curriculum appears.

Level 2: Learning assesses knowledge acquisition and skill development through assessments, quizzes, practical exercises, and certification exams. This level answers whether participants actually absorbed the material. Pre- and post-training comparisons provide concrete evidence of knowledge gained, making this level essential for validating training content itself.

Level 3: Behavior examines whether learners apply new skills on the job. This level requires observation, manager feedback, and performance tracking over weeks or months following training. Behavior change represents the critical bridge between learning and business impact—without application, even excellent training produces no organizational value.

Level 4: Results connects training to business outcomes like productivity gains, revenue increases, error reduction, or customer satisfaction improvements. This level justifies training investments to stakeholders and leadership, though establishing direct causation requires careful methodology.

Most organizations stop at Level 1 metrics, tracking completion rates and satisfaction scores. Moving to Level 3 and Level 4 evaluation distinguishes exceptional training programs from mediocre ones.

Key Metrics and KPIs for Online Training

Effective measurement requires selecting metrics aligned with your training objectives and organizational goals.

Engagement Metrics

| Metric | What It Measures | Benchmark |
|---|---|---|
| Course Completion Rate | Percentage who finish | 60-70% |
| Time on Task | Actual learning time | Match content length |
| Interaction Frequency | Active participation | Varies by platform |
| Drop-off Points | Content problem areas | Identify >20% exit points |

Engagement metrics reveal participation patterns but don’t indicate learning outcomes. High completion rates with low assessment scores signal content misalignment or assessment issues requiring investigation.
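As a rough illustration of the completion-rate and drop-off metrics in the table, the sketch below works from a list recording the last module each learner completed. The function names, the sample data, and the reading of ">20% exit points" as "more than 20% of the learners who reached a module exited there" are assumptions made for this example, not a standard definition.

```python
from collections import Counter


def completion_rate(last_module_reached, total_modules):
    """Fraction of learners whose last completed module is the final one."""
    if not last_module_reached:
        return 0.0
    finished = sum(1 for m in last_module_reached if m >= total_modules)
    return finished / len(last_module_reached)


def drop_off_points(last_module_reached, total_modules, threshold=0.20):
    """Modules where more than `threshold` of the learners who reached
    that module stopped without going further (assumed definition)."""
    exits = Counter(m for m in last_module_reached if m < total_modules)
    flagged = []
    for module in range(1, total_modules):
        reached = sum(1 for m in last_module_reached if m >= module)
        if reached and exits[module] / reached > threshold:
            flagged.append(module)
    return flagged


# Hypothetical data: last module completed by each of 10 learners (4-module course).
last_module = [4, 4, 4, 4, 4, 4, 2, 2, 2, 1]

print(completion_rate(last_module, 4))   # 0.6
print(drop_off_points(last_module, 4))   # [2]
```

Here module 2 is flagged because 3 of the 9 learners who reached it went no further, which is the kind of exit point worth reviewing for content problems.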

Learning Metrics

Knowledge retention metrics determine whether training produces lasting understanding:

- Pre-Assessment Scores: Baseline knowledge before training

- Post-Assessment Scores: Immediate knowledge after training

- Knowledge Retention Rate: Scores at 30/60/90 days post-training

- Skill Demonstration Success: Practical application pass rates

A frequently cited rule of thumb, loosely based on Ebbinghaus's forgetting curve, holds that without reinforcement employees forget approximately 70% of new information within 24 hours and up to 90% within a week; actual figures vary with the material and the learner. This underscores the importance of spaced repetition and ongoing assessment beyond initial training completion.
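The learning metrics listed above can be combined into two simple numbers: the gain from pre- to post-assessment, and how much of the post-training score survives at each follow-up. The sketch below is one possible way to compute them; the definition of retention rate as follow-up score divided by post-training score, and all scores shown, are assumptions for illustration.

```python
def learning_gain(pre, post):
    """Gain in percentage points between pre- and post-assessment."""
    return post - pre


def retention_rate(post, follow_up):
    """Follow-up score as a fraction of the immediate post-training score."""
    if post == 0:
        raise ValueError("post-assessment score must be non-zero")
    return follow_up / post


# Hypothetical average scores (percent correct) for one cohort.
scores = {"pre": 45.0, "post": 85.0, "day_30": 76.5, "day_60": 68.0, "day_90": 59.5}

gain = learning_gain(scores["pre"], scores["post"])
retention = {
    interval: round(retention_rate(scores["post"], scores[interval]), 2)
    for interval in ("day_30", "day_60", "day_90")
}

print(gain)       # 40.0
print(retention)  # {'day_30': 0.9, 'day_60': 0.8, 'day_90': 0.7}
```

A steadily falling retention rate like this one would suggest scheduling refreshers before the 30-day mark rather than after the 90-day assessment.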

Behavioral Metrics

Translating learning into behavioral change requires systematic observation:

- Manager Observations: Structured feedback on on-the-job performance

- 360-Degree Feedback: Input from peers, subordinates, and supervisors

- Performance Reviews: Pre- and post-training comparison

- Process Compliance: Adherence to new procedures or protocols

- Error Rates: Changes in mistake frequency for trained tasks

Business Impact Metrics

The ultimate measure connects training to organizational outcomes:

- Productivity Output: Units produced, deals closed, tickets resolved

- Quality Metrics: Error rates, customer complaints, rework requirements

- Revenue Impact: Sales increases, cost savings, efficiency gains

- Employee Metrics: Retention rates, promotion velocity, engagement scores

Calculating Training ROI

Return on Investment calculation provides the financial justification for training programs, though methodology requires careful attention.

The ROI Formula:

ROI (%) = [(Financial Benefits - Training Costs) / Training Costs] × 100

Identifying Costs:

- Course development or licensing fees

- Platform technology costs

- Instructor/facilitator time

- Participant wages during training

- Administrative overhead

Identifying Benefits:

- Productivity improvements (hours saved × hourly rate)

- Error reduction (incidents prevented × cost per incident)

- Quality improvements (reduced waste or rework)

- Time savings (process acceleration value)

- Reduced turnover (replacement cost avoidance)

The challenge lies in isolating training’s contribution from other factors affecting business outcomes. Control groups, statistical analysis, and conservative estimates help establish reasonable causation claims. Many organizations report training ROI between 25-200%, though results vary significantly by program type and industry.
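To make the ROI formula concrete, here is a minimal worked example that sums the cost and benefit categories listed above and applies the formula. Every figure is hypothetical, and the category names are just labels chosen for this sketch.

```python
def training_roi(financial_benefits, training_costs):
    """ROI (%) = (financial benefits - training costs) / training costs * 100."""
    if training_costs <= 0:
        raise ValueError("training costs must be positive")
    return (financial_benefits - training_costs) / training_costs * 100


# Hypothetical annual costs for one program.
costs = {
    "licensing": 20_000,
    "platform": 5_000,
    "facilitator_time": 8_000,
    "participant_wages": 15_000,
    "admin_overhead": 2_000,
}

# Hypothetical benefits, using the multiplications described in the list above.
benefits = {
    "productivity": 1_200 * 40.0,     # hours saved x hourly rate
    "error_reduction": 30 * 500.0,    # incidents prevented x cost per incident
    "reduced_turnover": 1 * 12_000.0, # departures avoided x replacement cost
}

total_costs = sum(costs.values())        # 50000
total_benefits = sum(benefits.values())  # 75000.0

print(training_roi(total_benefits, total_costs))  # 50.0
```

In practice the benefit figures should be the conservative, training-attributable portion only, which is why the isolation methods mentioned above matter more than the arithmetic.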

Measuring Engagement in Digital Learning

Digital training engagement extends beyond passive content consumption. Modern learning management systems provide granular data about learner behavior:

Activity Tracking:

- Login frequency and session duration

- Content access patterns and completion sequences

- Quiz attempts and score progression

- Discussion forum participation

- Resource downloads and revisits

Engagement Indicators:

- Module Progress: Sequential completion tracking

- Assignment Submission: Timely completion rates

- Peer Interaction: Collaboration and knowledge sharing

- Self-Directed Learning: Optional content exploration

Low engagement often indicates content relevance issues, technical barriers, or time constraints. Segmenting engagement data by department, role, or demographic reveals patterns requiring targeted intervention.
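Segmenting engagement data can be as simple as grouping exported LMS records by an attribute and averaging a metric per group. The sketch below assumes each record is a dictionary with hypothetical `department` and `session_minutes` fields; real LMS exports will use their own column names.

```python
from collections import defaultdict
from statistics import mean


def engagement_by_segment(records, segment_key, metric_key):
    """Average `metric_key` for each distinct value of `segment_key`."""
    groups = defaultdict(list)
    for record in records:
        groups[record[segment_key]].append(record[metric_key])
    return {segment: mean(values) for segment, values in groups.items()}


# Hypothetical export: one row per learner.
records = [
    {"department": "Sales", "session_minutes": 30},
    {"department": "Sales", "session_minutes": 50},
    {"department": "Support", "session_minutes": 10},
    {"department": "Support", "session_minutes": 20},
]

averages = engagement_by_segment(records, "department", "session_minutes")
print(averages)  # {'Sales': 40, 'Support': 15}
```

The same function works for role or any other attribute by changing `segment_key`, which makes it easy to check whether a low average is organization-wide or concentrated in one group.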

Tools and Technologies for Measurement

Modern learning analytics platforms provide sophisticated measurement capabilities:

Learning Management Systems (LMS):

Platforms like Cornerstone, SAP SuccessFactors, and Docebo offer built-in analytics dashboards tracking completion, scores, and engagement patterns. Most provide export capabilities for custom analysis.

Assessment Platforms:

Tools like Questionmark, ProProfs, or embedded LMS assessments enable sophisticated testing including adaptive quizzes, scenario-based assessments, and skills demonstrations.

Survey and Feedback Tools:

SurveyMonkey, Qualtrics, or integrated survey features capture reaction data and qualitative feedback essential for Level 1 evaluation.

Business Intelligence Integration:

Connecting learning data with HR analytics, productivity tools, and business systems enables Level 4 evaluation. API integrations between LMS platforms and BI tools like Tableau or Power BI facilitate comprehensive analysis.

Observation and Feedback Apps:

Mobile-friendly tools enable managers to provide structured behavioral feedback, creating data pipelines from the workplace to training analytics.

Common Mistakes in Training Measurement

Measuring Activity Instead of Outcomes: Completion rates and time spent indicate participation, not learning or impact. Organizations often confuse busywork with effective training.

Ignoring Long-Term Retention: Immediate post-training assessments show what learners can recall in the moment. Without follow-up testing, you cannot see the true picture of knowledge retention.

Skipping Behavior-Level Evaluation: Assessing knowledge immediately after training assumes transfer to job performance. Without observing actual behavior change, this assumption remains unverified.

Failing to Establish Baselines: Measuring post-training performance without pre-training comparison provides no improvement evidence. Control groups strengthen causal claims.

Overlooking Qualitative Feedback: Numerical metrics miss context. Open-ended feedback reveals why metrics look as they do and identifies improvement opportunities numbers alone cannot surface.

Evaluating Only Immediately After Training: Evaluating immediately after training captures temporary knowledge. Assessment at 30, 60, and 90 days reveals actual retention and application.
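The points above about baselines and control groups can be combined into a simple difference-in-differences estimate: compare the change in the trained group with the change in an untrained control group over the same period. This is a named statistical technique brought in for illustration, not something the framework above prescribes. It assumes the two groups would have followed similar trends without training, and the sketch does no significance testing. All data are hypothetical.

```python
from statistics import mean


def difference_in_differences(trained_pre, trained_post, control_pre, control_post):
    """Change in the trained group minus change in the control group."""
    trained_change = mean(trained_post) - mean(trained_pre)
    control_change = mean(control_post) - mean(control_pre)
    return trained_change - control_change


# Hypothetical metric: tickets resolved per week, per employee.
trained_pre = [20, 22, 18]
trained_post = [26, 28, 24]
control_pre = [21, 19, 20]
control_post = [22, 20, 21]

effect = difference_in_differences(trained_pre, trained_post, control_pre, control_post)
print(effect)  # 5
```

The trained group improved by 6 tickets per week, but the control group also improved by 1, so only 5 are plausibly attributable to training. Without the baseline and control data, the full 6 would have been credited to the program.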

Building a Measurement Framework

Effective measurement requires systematic planning:

Define Objectives First: What should participants know, do, or produce differently after training? Measurable objectives make it possible to select relevant metrics.

Choose Metrics Aligned with Objectives: Not every training program requires all four Kirkpatrick levels. Match measurement depth to training importance and investment.

Establish Data Collection Infrastructure: Determine how you’ll collect each metric before training launches. Retroactive measurement often proves impossible.

Set Realistic Baselines: Historical data, industry benchmarks, or pilot program results provide comparison points for evaluating improvement.

Create Reporting Cadence: Decide who needs what information when. Executive dashboards require different detail than facilitator feedback.

Iterate and Improve: Use measurement data to refine training content, delivery methods, and support systems. Measurement without action wastes the effort invested in data collection.

Conclusion

Measuring online training effectiveness requires moving beyond simple completion tracking to evaluate learning, behavior change, and business outcomes. The Kirkpatrick Model provides a proven framework, though full implementation requires sustained commitment and resources. Start with metrics aligned with your specific training objectives, build measurement infrastructure systematically, and use insights to continuously improve program quality. Organizations that master training measurement gain competitive advantage through evidence-based learning investments that genuinely improve workforce capability and business performance.

Frequently Asked Questions

How long does it take to see results from online training programs?

Behavior change typically becomes observable within 2-8 weeks post-training, depending on complexity. Business outcomes may take 3-12 months to manifest fully. Reaction data (Level 1) is available as soon as learners complete the course, while learning assessments (Level 2) should occur within days. For accurate ROI measurement, wait at least 6 months to allow sufficient time for behavioral application and business impact to materialize.

What is a good completion rate for online training?

Industry benchmarks suggest 60-70% completion rates for mandatory compliance training and 40-60% for optional professional development. However, completion rates vary significantly by training type, learner motivation, and platform usability. Rather than comparing to benchmarks, focus on understanding why completion rates are high or low through qualitative feedback and engagement analysis.

How do you measure knowledge retention after training?

Knowledge retention measurement requires assessment at multiple intervals post-training—at completion, then at 7 days, 30 days, and 90 days. Compare scores across these intervals to identify knowledge decay patterns. Spaced repetition systems and refresher modules help combat forgetting curves. Assessment formats should include practical application scenarios, not just recall questions.

Can you measure ROI for soft skills training?

Yes, though soft skills ROI measurement presents greater challenges than technical training. Focus on observable behavioral indicators like communication quality, teamwork effectiveness, and leadership behaviors. Use 360-degree feedback, manager observations, and customer satisfaction scores as proxies for intangible skills. While direct revenue attribution proves difficult, you can measure productivity improvements and conflict reduction to establish value.

What tools are best for tracking training effectiveness?

The best tools depend on your existing technology stack and measurement needs. Leading Learning Management Systems (Cornerstone, Docebo, SAP SuccessFactors) provide built-in analytics. For advanced analysis, integrate LMS data with business intelligence tools (Tableau, Power BI). Survey platforms (Qualtrics, SurveyMonkey) capture qualitative feedback. Choose tools that integrate with your HRIS and productivity systems to enable comprehensive outcome analysis.

March 21, 2026
